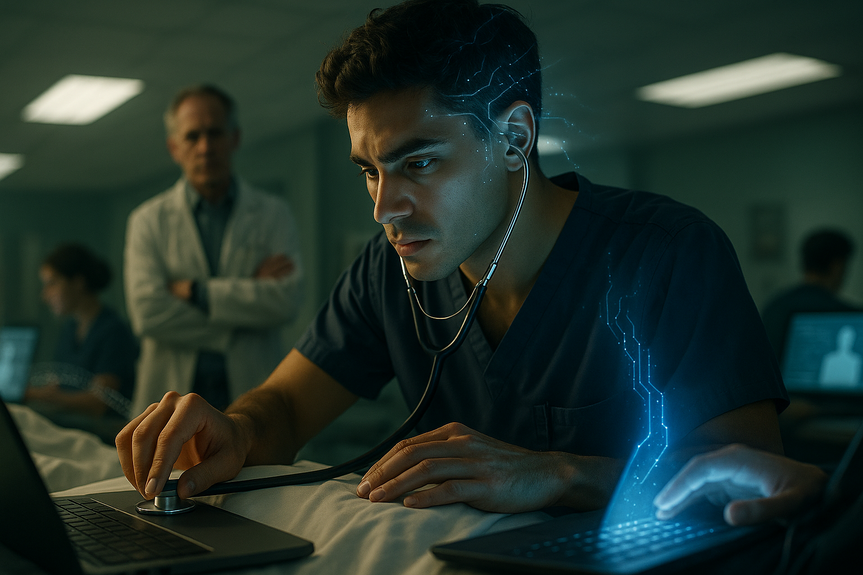

AI risks in medical training threaten clinical judgment: reshape training to preserve vital skills

AI risks in medical training are real: automation bias, deskilling, privacy gaps, and weaker memory. Experts urge schools and hospitals to set rules, teach AI literacy, and protect bedside skills. This guide explains the dangers, shows where AI helps, and lists steps to keep clinical judgment strong.

A recent editorial in a leading medical journal warned that overreliance on generative tools can weaken core clinical skills. The authors urged medical schools to set clear policies, run AI-free assessments, and teach data literacy. They also flagged bias and privacy problems, which can harm patients if left unchecked.

Hospitals are adding AI to imaging, notes, and triage. That can boost speed. But it can also nudge trainees to trust outputs they do not verify. New position papers from regulators echo this concern and call for ethical, legal, and professional guardrails.

AI risks in medical training: key pitfalls

Automation bias and over-trust

When AI suggests a diagnosis, students may accept it without a full differential. Over time, this can narrow their thinking and hide rare but serious causes.

Cognitive offloading and weaker memory

If learners lean on chatbots for facts and plans, recall may slip. Memory supports pattern recognition, which doctors need in emergencies and busy clinics.

Deskilling and outsourced reasoning

Step-by-step reasoning builds judgment. If AI drafts histories, exam plans, or notes, trainees may skip the hard parts where learning happens.

Bias and fairness

Some studies report that certain models downplay symptoms in women and show less empathy toward minority patients. Unchecked use can spread that bias into care.

Privacy and data governance

Uploading sensitive notes or images to external tools can breach privacy rules. Even de-identified data can be used to re-identify patients if controls are weak.

Skills that must be protected

Clinical reasoning: build broad differentials, test hypotheses, and update plans

Bedside communication: listen well, share risks, and show empathy

Physical examination: detect subtle signs that models may miss

Teamwork: coordinate with nurses, pharmacists, and specialists

Professional judgment: weigh uncertainty, values, and safety

Where AI can help—if used right

Imaging support: flag likely findings to prioritize reads, with human review

Documentation: draft summaries that clinicians edit for accuracy and tone

Simulation: create practice cases for reasoning drills and feedback

Education: generate quizzes, but require learners to explain answers

Use AI to speed lower-risk tasks. Keep humans in the loop for diagnosis, consent, and high-stakes decisions. This reduces AI risks in medical training while preserving learning.

Mitigation strategies for schools and hospitals

Clear policies and boundaries

Define allowed tools, use cases, and red lines (no patient uploads to public apps)

Require logging of AI use in assignments and clinical drafts

Create an approval pathway for new tools with security checks

Assessment redesign

Run AI-free stations: bedside interviews, physical exams, and team scenarios

Test clinical reasoning with oral exams and written differentials

Add a separate AI competency: prompt design, verification, bias spotting, error reporting

Curriculum upgrades

Teach how LLMs work, where they fail, and why they hallucinate

Drill source checking: ask learners to cite and compare primary guidelines

Practice “structured skepticism”: always seek alternatives and disconfirming evidence

Run bias labs: compare model outputs for diverse patient vignettes and correct them

Data privacy and security

Use enterprise AI deployed on-premises or in a compliant cloud, with audit logs and role-based access controls

De-identify data with automated tools and manual checks

Set strict consent rules for any training data reuse

Train staff on safe prompts and redaction
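As a rough illustration of the automated part of de-identification, the Python sketch below masks a few obvious identifier formats before text is reused. It is a teaching aid under stated assumptions, not a compliant de-identification tool: the patterns, the `PATTERNS` table, and the `redact` function are hypothetical, and real identifier formats vary by institution.

```python
import re

# Hypothetical patterns for illustration only; real formats differ by site.
PATTERNS = {
    "MRN": re.compile(r"\bMRN[:\s]*\d{6,10}\b", re.IGNORECASE),
    "DATE": re.compile(r"\b\d{1,2}/\d{1,2}/\d{2,4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}


def redact(text):
    """Replace each pattern match with a [LABEL] placeholder.

    Returns the redacted text and a dict of match counts per label,
    which a human reviewer can use as a starting point for manual checks.
    """
    counts = {}
    for label, pattern in PATTERNS.items():
        text, n = pattern.subn(f"[{label}]", text)
        counts[label] = n
    return text, counts


note = "Seen 03/12/2025, MRN: 12345678, call 555-123-4567 or jane.doe@example.org."
clean, hits = redact(note)
print(clean)
print(hits)
```

Pattern matching of this kind misses free-text names, addresses, and unusual phrasing, which is one reason the list above pairs automated tools with manual checks.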

Clinical governance

Form an AI oversight committee across IT, ethics, legal, and education

Adopt procurement standards: performance claims, bias audits, post-deployment monitoring

Report incidents and near-misses; share lessons across departments

Update policies as tools and regulations evolve

Practical playbook for educators

Start each rotation with an AI safety briefing and case-based examples

Pair every AI use with a verification step and a reflective note

Grade reasoning, not just answers; reward broad and justified differentials

Schedule regular “AI-off days” to keep memory and physical exam skills fresh

Use team huddles to compare human and AI plans and discuss gaps

Measuring progress

Track diagnostic accuracy and time to correct diagnosis

Score the breadth and justification of differentials in student notes

Run unannounced AI-free quizzes to check retention

Monitor patient satisfaction, especially communication and trust

Log AI-related near-misses and fix root causes

Equity and patient trust

Test models on diverse cases; demand subgroup performance metrics

Disclose AI use to patients when it informs care

Offer human-only pathways for sensitive scenarios

Invite community input on fairness and consent
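The call for subgroup performance metrics in the list above can be made concrete with a small calculation. The Python sketch below is illustrative only: the record fields (`group`, `truth`, `predicted`), the function name, and the sample data are hypothetical and not drawn from any real model or study. It computes sensitivity, the share of true cases a model flagged, separately for each patient subgroup so that gaps become visible.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """Return {group: sensitivity} for records with keys group, truth, predicted.

    Sensitivity = true positives / all truly positive cases in that group.
    Groups with no positive cases are reported as None.
    """
    true_pos = defaultdict(int)
    positives = defaultdict(int)
    groups = set()
    for r in records:
        groups.add(r["group"])
        if r["truth"]:
            positives[r["group"]] += 1
            if r["predicted"]:
                true_pos[r["group"]] += 1
    return {
        g: (true_pos[g] / positives[g]) if positives[g] else None
        for g in sorted(groups)
    }


# Hypothetical evaluation records, invented for illustration only.
sample = [
    {"group": "A", "truth": True, "predicted": True},
    {"group": "A", "truth": True, "predicted": True},
    {"group": "A", "truth": False, "predicted": False},
    {"group": "B", "truth": True, "predicted": True},
    {"group": "B", "truth": True, "predicted": False},
    {"group": "B", "truth": False, "predicted": False},
]

print(sensitivity_by_group(sample))
```

In this invented sample the model catches every true case in group A but only half in group B. A gap like that is the kind of result a vendor or oversight committee would need to explain before deployment.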

Human judgment must lead. AI can help with speed and access, but it should never replace careful thinking, touch, and empathy. With smart policies, strong teaching, and tough assessments, schools can lower AI risks in medical training while raising the bar on safe, fair, and human-centered care.

(Source: https://www.irishtimes.com/health/2025/12/03/doctors-reliance-on-ai-tools-could-erode-critical-thinking-experts-warn/)


FAQ

Q: What are the main AI risks in medical training?

A: AI risks in medical training include automation bias, deskilling, cognitive offloading and outsourcing of reasoning that can erode critical thinking and memory. Other major concerns are reinforcement of existing biases and privacy or data governance breaches that could harm patients.

Q: How can overreliance on AI weaken clinical skills?

A: Overreliance can lead trainees to accept suggested diagnoses without forming full differentials, narrowing their clinical reasoning and masking rare but serious causes. This pattern of cognitive offloading and reduced practice undermines memory retention and hands-on skills needed in emergencies.

Q: What institutional policies can reduce harm from AI use in education and practice?

A: Institutions should set clear boundaries defining allowed tools and red lines (for example, no patient uploads to public apps), require logging of AI use, and create approval pathways for new tools with security checks. They should also mandate enterprise-grade deployments, de-identification procedures and strict consent rules to address AI risks in medical training.

Q: How should assessments be redesigned to protect bedside and reasoning skills?

A: Assessments should include AI-free stations such as supervised bedside interviews, physical exams and team scenarios, alongside oral exams and written differentials that test clinical reasoning. Adding a formal AI competency focused on prompt design, verification, bias spotting and error reporting helps ensure responsible use.

Q: In what ways can AI be used safely in medical education without undermining learning?

A: AI can assist with imaging triage, draft documentation for clinician editing, create simulation cases for reasoning drills, and generate quiz items that require learners to explain answers. Used with human verification and reflective steps, these applications speed lower-risk tasks while keeping humans responsible for high-stakes decisions.

Q: What curriculum changes are recommended to teach safe AI use?

A: Curricula should teach how LLMs work, where they fail and why they hallucinate, plus source-checking, structured skepticism and bias labs comparing outputs across diverse patient vignettes. Training should build data literacy and make the evaluation of AI a formal competency so learners can design, test and safely incorporate tools.

Q: How can patient privacy be protected when AI tools are used in training?

A: Protect privacy by using enterprise or compliant cloud solutions with audit logs and role-based controls, and by applying automated and manual de-identification before data reuse. Institutions should set strict consent rules, limit training data use and train staff on safe prompting and redaction to prevent breaches.

Q: How can schools and hospitals measure whether mitigation strategies are working?

A: Measure progress by tracking diagnostic accuracy, time to correct diagnosis, and the breadth and justification of differentials in student notes, plus unannounced AI-free quizzes to assess retention. Also monitor patient satisfaction and log AI-related near-misses with root cause analysis to inform policy updates.