AI News

21 Nov 2025

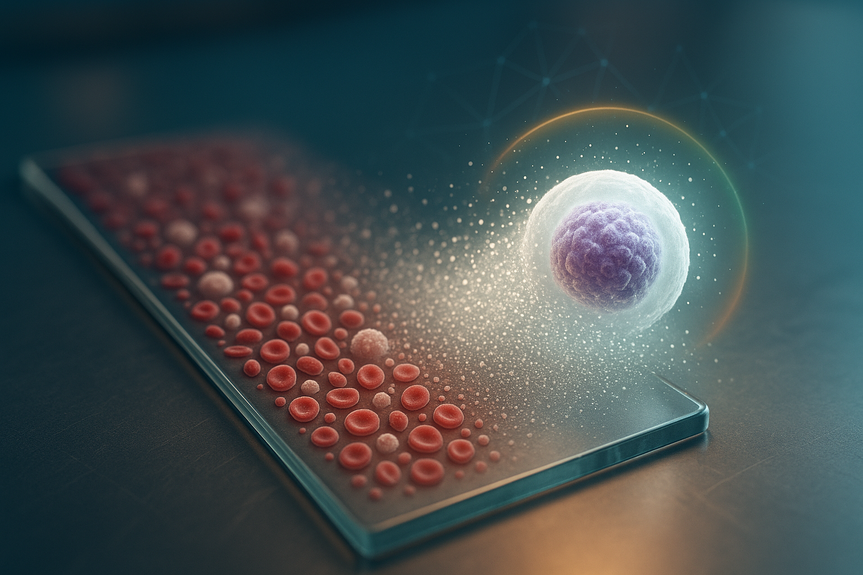

Generative AI blood smear analysis finds abnormalities fast

Generative AI blood smear analysis speeds diagnosis by flagging abnormal cells with expert-level accuracy.

How generative AI blood smear analysis works
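
The “noise to image” process described in this section is the forward process of a denoising diffusion model. The sketch below is a generic, textbook DDPM-style forward step in plain Python, not CytoDiffusion’s published code. A clean image `x0` (here a hypothetical list of pixel intensities) is blended with Gaussian noise according to a cumulative schedule `alpha_bar`. A network is then trained to predict the added noise, so the process can be run in reverse. The linear schedule values are common textbook defaults, assumed for illustration.

```python
import math
import random


def alpha_bar_schedule(num_steps: int = 1000,
                       beta_start: float = 1e-4,
                       beta_end: float = 0.02) -> list[float]:
    """Cumulative products alpha_bar_t = prod(1 - beta_s) for a linear beta schedule."""
    # Generic DDPM-style schedule (assumed); not CytoDiffusion's published settings.
    alpha_bars = []
    running = 1.0
    for t in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * t / (num_steps - 1)
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def noise_image(x0: list[float], alpha_bar: float,
                rng: random.Random) -> tuple[list[float], list[float]]:
    """Forward step: x_t = sqrt(alpha_bar) * x0 + sqrt(1 - alpha_bar) * eps."""
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    xt = [math.sqrt(alpha_bar) * p + math.sqrt(1.0 - alpha_bar) * e
          for p, e in zip(x0, eps)]
    # A denoising network is trained to recover eps from (xt, t);
    # running it in reverse turns pure noise into a realistic image.
    return xt, eps


schedule = alpha_bar_schedule()
cell = [0.2, 0.8, 0.5, 0.1]  # hypothetical 4-pixel "image"
xt, eps = noise_image(cell, schedule[500], random.Random(0))
```

Early in the schedule `alpha_bar` is close to 1 and the image is barely changed. By the last step it is close to 0 and almost nothing of the original remains.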

Most AI image tools are classifiers: they assign each image one of a fixed set of labels. CytoDiffusion takes a different approach. As a generative model, it learns what blood cells look like by learning the process that turns random noise into a realistic cell image. This training helps the system “understand” the many ways normal and diseased cells can appear, even when they look slightly different from patient to patient.

From whole smear to cell-by-cell insight
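
This section describes scoring every cell and rolling the scores up into a slide-level map, which can be sketched as follows. The record fields (`x` and `y` in micrometres, an `abnormality` score in [0, 1]), the 0.5 flag threshold and the 500 µm tile size are hypothetical choices for illustration. The article does not describe CytoDiffusion’s actual output format.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class CellScore:
    cell_id: int
    x: float            # hypothetical slide coordinate, micrometres
    y: float
    abnormality: float  # hypothetical model score in [0, 1]


def summarize_slide(cells: list[CellScore],
                    flag_threshold: float = 0.5,
                    tile_size: float = 500.0) -> dict:
    """Flag cells at or above a threshold and count flags per tile (a coarse heatmap)."""
    flagged = [c for c in cells if c.abnormality >= flag_threshold]
    heatmap = Counter((int(c.x // tile_size), int(c.y // tile_size)) for c in flagged)
    return {
        "n_cells": len(cells),
        "n_flagged": len(flagged),
        "flagged_ids": [c.cell_id for c in flagged],
        "heatmap": dict(heatmap),       # (tile_col, tile_row) -> flagged count
        "needs_review": bool(flagged),  # slides with no flags are routine candidates
    }


slide = [CellScore(1, 10.0, 12.0, 0.05),
         CellScore(2, 610.0, 40.0, 0.92),
         CellScore(3, 720.0, 35.0, 0.71)]
summary = summarize_slide(slide)
```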

A single smear can contain thousands of cells. A person cannot inspect every one, but the model can. CytoDiffusion processes an entire slide, scores each cell, and builds a map of potential abnormalities. It highlights what needs a human review and clears routine cases, cutting time spent on manual screening.

Why generative beats plain classification for morphology
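
One published way to turn a diffusion model into a classifier works as follows. It is given here as an assumption, not as CytoDiffusion’s confirmed method. First, add noise to the image. Then ask a class-conditioned denoiser for each candidate label to predict that noise, and pick the label with the lowest average error. The `make_template_denoiser` helper is a hypothetical toy stand-in for a trained network. It “knows” one template image per class, which is enough to show the decision rule.

```python
import math
import random
from typing import Callable

Denoiser = Callable[[list[float], float], list[float]]


def make_template_denoiser(template: list[float]) -> Denoiser:
    """Hypothetical toy noise predictor that assumes the clean image equals `template`."""
    def predict_eps(xt: list[float], alpha_bar: float) -> list[float]:
        return [(v - math.sqrt(alpha_bar) * m) / math.sqrt(1.0 - alpha_bar)
                for v, m in zip(xt, template)]
    return predict_eps


def classify_by_denoising(x0: list[float], denoisers: dict[str, Denoiser],
                          alpha_bars: list[float], rng: random.Random,
                          n_samples: int = 8) -> tuple[str, dict[str, float]]:
    """Return the label whose denoiser best predicts the added noise, plus mean errors."""
    errors = {label: 0.0 for label in denoisers}
    for _ in range(n_samples):
        ab = rng.choice(alpha_bars)  # random noise level; must be < 1
        eps = [rng.gauss(0.0, 1.0) for _ in x0]
        xt = [math.sqrt(ab) * p + math.sqrt(1.0 - ab) * e for p, e in zip(x0, eps)]
        # Same noise for every label, so the comparison between labels is paired.
        for label, predict in denoisers.items():
            pred = predict(xt, ab)
            errors[label] += sum((p - e) ** 2 for p, e in zip(pred, eps)) / len(eps)
    mean_errors = {label: err / n_samples for label, err in errors.items()}
    return min(mean_errors, key=mean_errors.get), mean_errors


denoisers = {"normal": make_template_denoiser([0.5, 0.5, 0.5, 0.5]),
             "abnormal": make_template_denoiser([0.9, 0.1, 0.9, 0.1])}
label, errs = classify_by_denoising([0.9, 0.1, 0.9, 0.1], denoisers,
                                    [0.9, 0.5, 0.1], random.Random(0))
```

The gap between the best and second-best errors could serve as one input to the uncertainty scoring this section mentions.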

– It captures variation. Blood cells vary by patient, disease stage, treatment, and staining differences.
– It handles subtlety. Minor shape changes can signal disease. Generative models learn these nuanced patterns.
– It scales to the whole slide. Instead of sampling a few fields, it evaluates everything, reducing sampling bias.
– It supports better uncertainty scoring. The model can signal when its view is unclear, guiding safe hand-offs to experts.

Performance that supports clinical use
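
Sensitivity and specificity, the two figures reported in this section, are simple ratios over a confusion matrix. The counts in the sketch below are made up to land near the reported figures; they are not the study’s data. They also show why specificity still needs attention at 96%. At that level, roughly 4 of every 100 normal cells are flagged by mistake.

```python
def sensitivity(tp: int, fn: int) -> float:
    """Share of truly abnormal cells that the model flags: TP / (TP + FN)."""
    return tp / (tp + fn)


def specificity(tn: int, fp: int) -> float:
    """Share of truly normal cells that the model leaves unflagged: TN / (TN + FP)."""
    return tn / (tn + fp)


# Hypothetical counts: 1,000 abnormal cells and 10,000 normal cells.
sens = sensitivity(tp=905, fn=95)    # 905 of 1,000 abnormal cells caught
spec = specificity(tn=9620, fp=380)  # 380 normal cells falsely flagged
```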

In published testing, the system reached over 90% sensitivity and about 96% specificity when detecting abnormal cells. This balance matters. High sensitivity means few abnormal cells are missed, which helps catch disease early. High specificity means few normal cells are wrongly flagged, which reduces false alarms and extra work for clinicians. The model also stood up to stress tests: it handled images from devices it had not seen before and performed well on new data.

When the model is unsure, it says so
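
Calibrated uncertainty, the feature this section describes, has a measurable meaning. Among cases where the model reports 80% confidence, it should be right about 80% of the time. Expected calibration error (ECE) is one standard way to check this. The equal-width binning below is a common textbook variant, assumed here rather than taken from the study.

```python
def expected_calibration_error(confidences: list[float], correct: list[bool],
                               n_bins: int = 10) -> float:
    """Weighted mean gap between average confidence and accuracy across confidence bins."""
    # Equal-width binning: one common ECE variant (assumed, not from the study).
    if len(confidences) != len(correct) or not confidences:
        raise ValueError("need two non-empty lists of equal length")
    bins: list[list[tuple[float, bool]]] = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)  # confidence 1.0 goes in the top bin
        bins[idx].append((conf, ok))
    total = len(confidences)
    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(ok for _, ok in members) / len(members)
        ece += len(members) / total * abs(avg_conf - accuracy)
    return ece


well_calibrated = expected_calibration_error([0.75] * 4, [True, True, True, False])
overconfident = expected_calibration_error([0.95] * 4, [True, True, False, False])
```

A low ECE is what makes a confidence cut-off for handing cases to a specialist trustworthy. The overconfident example, 95% sure but right only half the time, scores an ECE of 0.45.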

One of the standout features is calibrated uncertainty: the model’s stated confidence tracks how often it is actually right. Humans can be confident and wrong, and the system is designed to avoid that. When the risk of error is high, it flags the case so it reaches a specialist sooner. This “know what you don’t know” behavior supports safer decisions and stronger trust.

Realism through synthetic images

Because the model is generative, it can create synthetic cell images that look real to expert eyes. This has two benefits:
– It helps validate the model’s internal understanding of cell morphology.
– It may help expand training data for rare cell types while protecting patient privacy, when used with proper controls.

Fueling progress with a massive, open dataset

CytoDiffusion was trained on more than half a million smear images from Addenbrooke’s Hospital in Cambridge, one of the largest cell morphology datasets available. The research team plans to share this resource with the community. Open data can speed discoveries, improve benchmarking between labs, and make tools more reliable across health systems.

Why size and diversity matter

– More images mean better coverage of normal and rare cell appearances.
– Data from varied equipment helps the model generalize to different lab setups.
– Public access invites peer review and faster, more transparent improvements.

What this means for labs and clinicians

Generative AI blood smear analysis is not a replacement for hematologists. It is a force multiplier. By scanning every cell and sorting cases, it frees time for complex diagnosis and patient care.

Day-to-day benefits
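
The faster-triage benefit, where urgent cases move up the queue, can be made concrete with a priority queue. The priority tiers and the 0.8 and 0.5 cut-offs below are hypothetical; each lab would set its own during validation.

```python
import heapq
from dataclasses import dataclass, field


@dataclass(order=True)
class QueuedSlide:
    priority: int                         # lower number = reviewed sooner
    arrival: int                          # tie-breaker: first come, first served
    slide_id: str = field(compare=False)


def triage_order(slides: list[tuple[str, float, bool]]) -> list[str]:
    """slides: (slide_id, highest per-cell abnormality score, model_uncertain)."""
    heap: list[QueuedSlide] = []
    for arrival, (slide_id, max_score, uncertain) in enumerate(slides):
        # Hypothetical tiers and cut-offs, for illustration only.
        if max_score >= 0.8:
            priority = 0  # strong abnormal signal
        elif uncertain:
            priority = 1  # model unsure: send to an expert with context
        elif max_score >= 0.5:
            priority = 2  # moderate signal
        else:
            priority = 3  # routine: candidate for fast clearance
        heapq.heappush(heap, QueuedSlide(priority, arrival, slide_id))
    return [heapq.heappop(heap).slide_id for _ in range(len(heap))]


order = triage_order([("S1", 0.10, False), ("S2", 0.93, False),
                      ("S3", 0.30, True), ("S4", 0.60, False)])
```

Here the strongly abnormal slide S2 comes first, the uncertain S3 second, and the routine S1 last.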

– Faster triage: Routine slides can be cleared quickly, so urgent cases move up the queue.
– Fewer missed findings: Scanning all cells cuts the risk of overlooking rare morphologies.
– Better consistency: Standardized scoring reduces variability between human readers and shifts.
– Clear escalation: When the model is unsure, the case routes to the expert with context attached.

Clinical scenarios that gain the most

– Anemia workups: Rapid review of shape changes like target cells or schistocytes.
– Suspected leukemia: Early flagging of blasts and atypical precursors for urgent confirmation.
– Infection signals: Quick spotting of reactive lymphocytes or toxic neutrophil changes.
– Therapy monitoring: Tracking shifts in morphology over time to support treatment decisions.

Implementation guide for hospital labs

Moving from a promising paper to safe practice needs planning. Here is a practical path many labs can follow.

Build a robust pilot
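
A silent-mode pilot comes down to comparing the model’s calls with human calls on the same slides. The sketch below reports three things: raw agreement, Cohen’s kappa, and the indices of the disagreements to review. Cohen’s kappa measures agreement corrected for chance; it is a standard statistic chosen here as an assumption. Binary slide-level calls (`True` = abnormal) are a simplifying assumption.

```python
def compare_silent_mode(model_calls: list[bool], human_calls: list[bool]) -> dict:
    """Agreement between model and human slide-level calls collected in silent mode."""
    if len(model_calls) != len(human_calls) or not model_calls:
        raise ValueError("need two non-empty lists of equal length")
    n = len(model_calls)
    p_observed = sum(m == h for m, h in zip(model_calls, human_calls)) / n
    p_model = sum(model_calls) / n  # share the model calls abnormal
    p_human = sum(human_calls) / n  # share the humans call abnormal
    # Agreement expected by chance if both raters called at random with those rates.
    p_chance = p_model * p_human + (1 - p_model) * (1 - p_human)
    # Kappa is undefined when both raters are unanimous and identical; report 1.0 then.
    kappa = 1.0 if p_chance == 1 else (p_observed - p_chance) / (1 - p_chance)
    disagreements = [i for i, (m, h) in enumerate(zip(model_calls, human_calls)) if m != h]
    return {"agreement": p_observed, "kappa": kappa, "disagreements": disagreements}


report = compare_silent_mode([True, True, False, False], [True, False, False, False])
```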

– Run the model in “silent mode” first, where its output is recorded but not used for patient care; compare results with human readers and study disagreements.
– Use diverse instruments and staining protocols to confirm stable performance.
– Define clear thresholds for escalation and documentation.

Integrate with your systems
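
Structured output with an audit trail might look like the sketch below. It produces a JSON report with per-cell scores and a slide-level summary. It also produces an audit entry holding the model version and a SHA-256 hash of the exact report text, so any later change to a stored report is detectable. All field names are hypothetical; a real deployment would follow its laboratory information system’s interface.

```python
import hashlib
import json
from datetime import datetime, timezone


def build_report(slide_id: str, cell_scores: dict[str, float],
                 model_version: str, flag_threshold: float = 0.5) -> tuple[str, dict]:
    """Return (report JSON text, audit entry) for one slide. Field names are hypothetical."""
    flagged = sorted(cid for cid, score in cell_scores.items() if score >= flag_threshold)
    report = {
        "slide_id": slide_id,
        "model_version": model_version,
        "generated_at": datetime.now(timezone.utc).isoformat(),
        "per_cell_scores": cell_scores,
        "summary": {"n_cells": len(cell_scores), "n_flagged": len(flagged),
                    "flagged_cell_ids": flagged},
    }
    report_json = json.dumps(report, sort_keys=True)
    audit_entry = {
        "slide_id": slide_id,
        "model_version": model_version,
        "generated_at": report["generated_at"],
        # Hash of the exact stored text makes later tampering detectable.
        "sha256": hashlib.sha256(report_json.encode("utf-8")).hexdigest(),
    }
    return report_json, audit_entry


report_json, audit = build_report("SLIDE-001", {"c1": 0.12, "c2": 0.87}, "model-v1.0")
```

Store the JSON text exactly as it was hashed. Re-serializing a parsed copy can change its formatting and break the hash check.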

– Connect the model to your slide scanner and laboratory information system.
– Configure structured outputs, including per-cell scores and a slide-level summary.
– Keep an audit trail. Every AI decision should be traceable.

Train the team
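
Simple rules for when to trust, verify, or ignore model suggestions can be written down explicitly, so that every reader applies them the same way. The score and uncertainty inputs, the action names, and all three thresholds below are hypothetical placeholders. A lab would set them from its own pilot data.

```python
def recommended_action(abnormality: float, uncertainty: float,
                       clear_below: float = 0.2, flag_above: float = 0.8,
                       max_uncertainty: float = 0.3) -> str:
    """Map a model score and its uncertainty to a reviewer action (hypothetical policy)."""
    if uncertainty > max_uncertainty:
        return "escalate"          # model unsure: expert review regardless of score
    if abnormality >= flag_above:
        return "confirm-abnormal"  # likely abnormal: a human confirms before reporting
    if abnormality <= clear_below:
        return "accept-normal"     # confident and routine: may be cleared
    return "verify"                # in-between score: full human check


actions = [recommended_action(s, u) for s, u in [(0.1, 0.05), (0.9, 0.05), (0.5, 0.05), (0.1, 0.5)]]
```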

– Teach staff how to read AI summaries, uncertainty scores, and heatmaps.
– Create simple rules for when to trust, verify, or ignore model suggestions.
– Encourage feedback. Edge cases and misreads should feed continuous improvement.

Monitor and maintain
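
Tracking these numbers against agreed goals can be automated with a small monthly check. The minimum sensitivity, minimum specificity and maximum escalation rate below are hypothetical targets, not values from the study. Time saved is left out because it needs workflow timestamps.

```python
from dataclasses import dataclass


@dataclass
class MonthlyCounts:
    tp: int            # abnormal slides the model flagged
    fn: int            # abnormal slides the model missed
    tn: int            # normal slides the model cleared
    fp: int            # normal slides the model flagged
    escalated: int     # slides routed to an expert because of model uncertainty
    total_slides: int


def review_month(c: MonthlyCounts, min_sensitivity: float = 0.90,
                 min_specificity: float = 0.95, max_escalation_rate: float = 0.15) -> dict:
    """Compute monitoring metrics and list any that miss their (hypothetical) targets."""
    metrics = {
        "sensitivity": c.tp / (c.tp + c.fn),
        "specificity": c.tn / (c.tn + c.fp),
        "escalation_rate": c.escalated / c.total_slides,
    }
    alerts = []
    if metrics["sensitivity"] < min_sensitivity:
        alerts.append("sensitivity")
    if metrics["specificity"] < min_specificity:
        alerts.append("specificity")
    if metrics["escalation_rate"] > max_escalation_rate:
        alerts.append("escalation_rate")
    return {"metrics": metrics, "alerts": alerts}


result = review_month(MonthlyCounts(tp=17, fn=3, tn=173, fp=7, escalated=30, total_slides=200))
```

An alert, especially one that appears after a scanner or staining change, is the cue to recalibrate and revalidate.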

– Track sensitivity, specificity, time saved, and the rate of escalations.
– Recalibrate after hardware or staining changes.
– Commit to periodic revalidation and version control.

Safety, fairness, and regulation

Patient safety comes first. Adopting a generative model means adding new checks to a lab’s quality system.

Key safeguards
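
The article names no method for monitoring drift over time. One widely used check, chosen here as an assumption, is the population stability index (PSI). It compares the distribution of model scores in a recent period with a validated baseline. The sketch assumes scores lie in [0, 1]. The alert level is for each lab to set, though values above roughly 0.25 are often quoted as a sign of significant shift.

```python
import math


def population_stability_index(baseline: list[float], current: list[float],
                               n_bins: int = 10, floor: float = 1e-6) -> float:
    """PSI = sum over bins of (cur - base) * ln(cur / base), equal-width bins on [0, 1]."""
    def bin_shares(scores: list[float]) -> list[float]:
        counts = [0] * n_bins
        for s in scores:
            counts[min(int(s * n_bins), n_bins - 1)] += 1
        # Floor empty bins so the logarithm stays finite.
        return [max(count / len(scores), floor) for count in counts]

    base = bin_shares(baseline)
    cur = bin_shares(current)
    return sum((c - b) * math.log(c / b) for b, c in zip(base, cur))


baseline_scores = [0.05] * 80 + [0.55] * 15 + [0.95] * 5
stable = population_stability_index(baseline_scores, list(baseline_scores))
shifted = population_stability_index(baseline_scores, [0.05] * 50 + [0.55] * 40 + [0.95] * 10)
```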

– Bias review: Ensure the dataset represents your patient population. Monitor drift over time.
– Privacy: Use de-identified images and follow strict data governance for training and audits.
– Robustness: Validate the model with your scanners, stains, and workflows before clinical use.
– Cybersecurity: Protect model servers and integrations from tampering.
– Regulatory path: Align with local rules for clinical AI, including documentation and performance claims.

Beyond detection: what comes next

Generative models can do more than sort normal from abnormal. Their ability to model full morphology opens new doors.

Promising expansions
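
An active-learning loop can start from a very simple selection rule. Send the cells the model is least sure about to experts for labeling, then retrain. The sketch measures uncertainty as the binary entropy of the model’s abnormality probability. This is a standard “uncertainty sampling” choice, assumed here for illustration. The cell IDs and probabilities are hypothetical.

```python
import math


def binary_entropy(p: float) -> float:
    """Entropy in bits of a yes/no prediction with probability p; highest at p = 0.5."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -(p * math.log2(p) + (1 - p) * math.log2(1 - p))


def select_for_labeling(predictions: list[tuple[str, float]], budget: int) -> list[str]:
    """Pick up to `budget` cell IDs with the most uncertain abnormality probabilities."""
    ranked = sorted(predictions, key=lambda item: binary_entropy(item[1]), reverse=True)
    return [cell_id for cell_id, _ in ranked[:budget]]


batch = [("c1", 0.97), ("c2", 0.52), ("c3", 0.08), ("c4", 0.40)]  # hypothetical
to_label = select_for_labeling(batch, budget=2)
```

Cases where the model and a human reader disagreed are natural additions to the same labeling queue.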

– Automated morphology scoring: Standardize grades for dysplasia, anisocytosis, or poikilocytosis.
– Longitudinal tracking: Map morphology changes across treatment cycles and link to outcomes.
– Rare cell discovery: Surface unusual patterns for expert review and research.
– Active learning loops: Use difficult cases and disagreements to keep improving the model.

Limitations and open questions

As with any tool, there are boundaries and risks to manage.

Points to watch
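
Capacity planning for whole-slide analysis is mostly arithmetic. The function below estimates how many GPUs a lab would need. Every input is a hypothetical placeholder, including the per-cell inference time and the 70% utilization. Replace them with values measured in a local benchmark. Generative models that score a cell by repeated denoising can need many network evaluations per cell, so `seconds_per_cell` should be measured on the actual model.

```python
import math


def gpus_needed(slides_per_day: int, cells_per_slide: int, seconds_per_cell: float,
                hours_per_day: float = 24.0, utilization: float = 0.7) -> int:
    """Smallest number of GPUs whose usable daily time covers the day's inference load."""
    load_seconds = slides_per_day * cells_per_slide * seconds_per_cell
    usable_seconds_per_gpu = hours_per_day * 3600 * utilization  # hypothetical utilization
    return math.ceil(load_seconds / usable_seconds_per_gpu)


# Hypothetical lab: 2,000 slides/day, 5,000 cells per slide, 20 ms per cell.
estimate = gpus_needed(2000, 5000, 0.02)
```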

– Generalization: Even with strong results, performance can drop with new staining methods or scanner optics. Continuous validation is vital.
– Edge cases: Extremely rare morphologies may need more training examples or manual oversight.
– Synthetic data pitfalls: Synthetic images can aid training but must not drift from clinical reality.
– Throughput and cost: Whole-slide analysis can demand substantial compute; labs need to plan capacity and control costs.
– Human factors: AI that is “almost always right” can cause over-trust. Training and clear escalation rules reduce that risk.

How generative AI could reshape hematology

Generative AI blood smear analysis is part of a wider shift: machines handling volume and pattern finding while humans make the final call. If labs adopt it well, they can shorten time-to-answer, raise diagnostic confidence, and extend specialist expertise to smaller hospitals. That means quicker starts on treatment and fewer repeat tests.

Measuring value
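
Turnaround time, the first value measure listed below, is easiest to compare as a median before and after go-live. A median resists skew from a few very slow cases, which would distort an average. The minute values here are invented for illustration.

```python
from statistics import median


def turnaround_change(before_minutes: list[float], after_minutes: list[float]) -> dict:
    """Median turnaround before and after adoption, and the percentage reduction."""
    before = median(before_minutes)
    after = median(after_minutes)
    return {"median_before": before, "median_after": after,
            "reduction_pct": 100 * (before - after) / before}


# Invented turnaround times in minutes; one very slow case in each period.
change = turnaround_change([60, 90, 480], [30, 45, 400])
```

In this example, the single slow case in each period would dominate a mean, while the medians (90 and 45 minutes) show the typical 50% reduction.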

– Turnaround time: Faster initial reads and priority routing.
– Diagnostic yield: Higher detection of subtle abnormalities.
– Consistency: Less inter-reader variability across shifts and sites.
– Staff satisfaction: Less fatigue from repetitive scanning, more focus on complex cases.
– System learning: Each reviewed case improves both human and machine knowledge.

Practical tips for a smooth rollout

– Start with a narrow use case, such as abnormal cell flagging for adult smears.
– Define performance goals (for example, minimum sensitivity and specificity) and review them monthly.
– Keep humans in the loop for all non-routine results.
– Share lessons with partner labs to build common standards and trust.
– Plan for scale: storage, compute, and network bandwidth for whole-slide images.

The bottom line: this Cambridge-led work suggests a safer, faster path for cell morphology at scale. With careful validation and a human-in-the-loop design, generative AI blood smear analysis can boost accuracy, reduce delays, and bring consistent quality to every slide. The promise is not to replace expertise, but to amplify it where it counts.

In short, labs that move early, and move carefully, will set a new standard for hematology. Patients benefit from earlier detection, clearer decisions, and fewer missed abnormalities. With the right guardrails in place, generative AI blood smear analysis can become a daily asset in modern diagnostics.

(Source: https://www.insideprecisionmedicine.com/topics/informatics/generative-ai-spots-abnormal-blood-cells-better-than-experts/)
