
27 Jan 2026


How GPT-5.2 Pro FrontierMath results reveal math wins

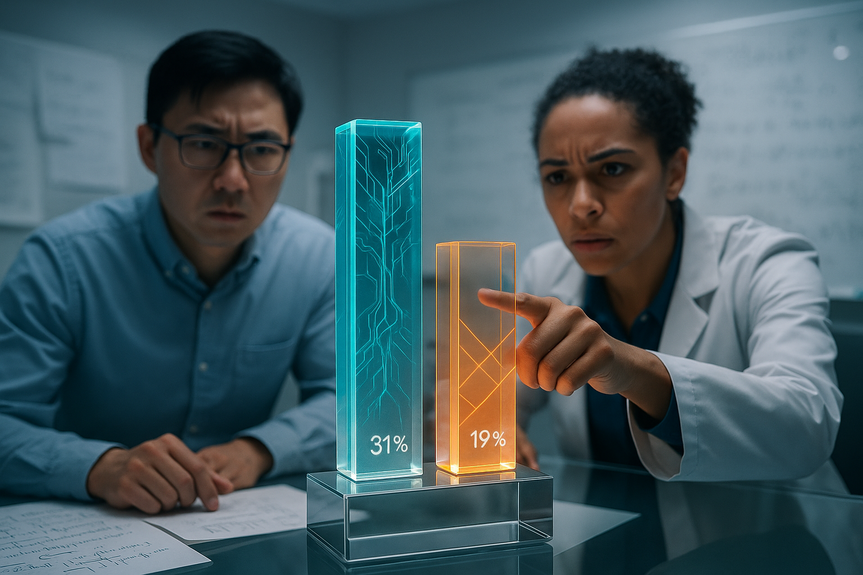

GPT-5.2 Pro scored 31 percent on FrontierMath Tier 4, a breakout result that points to faster math exploration.

What the GPT-5.2 Pro FrontierMath results actually show

Tier 4 performance: why it matters

FrontierMath is known as a hard benchmark. It aims to test reasoning across multiple steps, not just short answers. Tier 4 is the top level and is considered the hardest part of the suite. Hitting 31 percent there is not a small edge; it is a breakout result. These tasks push a model to plan, prove, and check. They are not simple arithmetic. The fact that GPT-5.2 Pro solved four previously unsolved tasks hints at new capabilities, or at least better search and verification strategies inside the model. This is why the headline number matters. It suggests a qualitative shift, not just marginal gains.

Scores in context

The most meaningful comparisons in Epoch AI’s report are simple:

How the tests were run and why it matters

Epoch AI ran the tests through the ChatGPT website. They chose this route due to API issues at the time. Manual testing is not ideal for strict reproducibility, but it can still be useful, especially when prompt formatting and long outputs matter. It also reflects how many people actually use these models: interactively, not just through code.

The upside of manual runs is that evaluators can nudge the model to show steps or rethink a path when it stalls. The downside is that small changes in wording can move results. Because of that, replication by other labs will be important. Even so, the difference in scores here is large enough that independent teams will likely see a similar ordering, even if their exact numbers differ.

Did the model do new math, or just better benchmark math?

Four first-time solves

According to Epoch AI, GPT-5.2 Pro solved four benchmark tasks that no other model had solved before. That matters, but we should parse it carefully. “First-time” in a benchmark context means first-time on those items as defined and scored. It does not mean the model created a new theorem or proof that changes the field. Still, it shows better coverage of hard cases, which is exactly what Tier 4 is designed to test.

Reviewer feedback from mathematicians

Mathematicians who looked at the model’s work saw real value. They said many solutions were useful and mostly correct. At the same time, they flagged explanations that lacked precision. This is a familiar pattern. Models can reach the right idea, but they may skip a justification or use loose language. That gap is where human oversight remains key.

From benchmark gains to real research

Recent reports say GPT-5 variants helped with real math work. Some posts claim the system solved Erdős problems on its own, and helped researchers with others. These claims come with cautions from the community. Renowned mathematician Terence Tao, for example, warns against quick conclusions. He suggests that the apparent difficulty of a problem can shrink when you apply enough speed and search, which modern models can do.

The truth likely sits in the middle. Models can be very useful in exploration. They can try many approaches fast, spot patterns, and produce drafts. But they still need a strong human in the loop to check logic and fill gaps. The GPT-5.2 Pro FrontierMath results support this view. The system is getting better at the “try many paths and refine” loop. It still struggles with complete rigor at every step.

How this changes day-to-day math work

For students

If you are a student, this result means you can lean on the tool for harder problem sets, but with care. The model can outline approaches and show common strategies. You should still do the checking. Ask the model to justify each step, not just give a final answer. When possible, verify with a second method.

For teachers

Teachers can use the model to generate varied practice problems and worked solutions. They can also use it to show common errors and why they fail. The reported lack of precision in some explanations can be a feature here, because it creates teachable moments. Students can learn to critique, not copy.

For research teams

Teams exploring proofs or conjectures can use the model for search. Let it suggest lemmas, outline structures, or test special cases. Then apply human review. Keep a log of prompts and outputs so you can reproduce useful lines of thought. This practice makes collaboration and later verification easier.

Good uses and pitfalls to watch

Strong use cases

Pitfalls
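One pitfall raised earlier is that small changes in wording can move results. A score on a small problem set also carries sampling uncertainty of its own. The sketch below uses a standard Wilson score interval to show how wide that uncertainty can be. The counts in the usage note are placeholders chosen for illustration, not figures from Epoch AI's report, and the function name is hypothetical.

```python
import math


def wilson_interval(solved, total, z=1.96):
    """Wilson score interval for a pass rate (z=1.96 is roughly 95% confidence).

    `solved` and `total` are whatever counts a given evaluation reports;
    nothing here is specific to FrontierMath.
    """
    if total <= 0 or not 0 <= solved <= total:
        raise ValueError("need 0 <= solved <= total and total > 0")
    p = solved / total
    denom = 1 + z ** 2 / total
    center = (p + z ** 2 / (2 * total)) / denom
    half_width = (
        z * math.sqrt(p * (1 - p) / total + z ** 2 / (4 * total ** 2)) / denom
    )
    return center - half_width, center + half_width
```

Calling `wilson_interval(15, 48)` on these placeholder counts gives a band more than 20 percentage points wide, so two runs of the same model could plausibly land several points apart without any real change in ability.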

Simple workflow tips
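The advice to research teams above, keeping a log of prompts and outputs, can be sketched in a few lines. This is a minimal example, assuming a local JSON Lines file is enough. The function names, field names, and model label are all hypothetical and not tied to any vendor's API.

```python
import json
import time
from pathlib import Path


def log_exchange(log_path, prompt, output, model_label, note=""):
    """Append one prompt/output pair to a JSON Lines file and return the record."""
    record = {
        # UTC timestamp so logs from different machines sort consistently.
        "timestamp": time.strftime("%Y-%m-%dT%H:%M:%SZ", time.gmtime()),
        "model": model_label,  # free-form label chosen by the team (assumption)
        "prompt": prompt,
        "output": output,
        "note": note,          # e.g. reviewer remarks such as "gap in step 3"
    }
    with Path(log_path).open("a", encoding="utf-8") as f:
        f.write(json.dumps(record, ensure_ascii=False) + "\n")
    return record


def load_log(log_path):
    """Read every logged exchange back, oldest first."""
    with Path(log_path).open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]
```

An append-only file keeps each exchange in the order it happened, so a useful line of thought can be replayed later or handed to a human reviewer.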

Where this sits among other math benchmarks

FrontierMath is known as a tough yardstick. It differs from lighter problem sets that focus on short answers. The Tier 4 slice is especially demanding. A 31 percent score there indicates the system can handle a fair share of long, multi-step problems under test conditions.

No single benchmark tells the whole story. Scores can depend on prompt style, temperature, and evaluation rules. But when one model opens a double-digit lead over strong peers, it is a sign that the training recipe and inference process improved in a meaningful way. In that sense, this result is more than a number. It is a signal of a working approach that other labs will try to match.

Why the model might be improving now

We can only speculate about the causes, but a few factors are likely:

What to watch next

Replication will matter. Other teams should run FrontierMath with careful, fixed prompts and public logs. We also need cross-benchmark checks. If gains appear on several hard math tests, not just one, the case grows stronger. Another key step is blind review by independent mathematicians, who can grade proofs without knowing which model wrote them.

On the product side, we should watch for stable API access to the exact model Epoch AI tested. That will allow larger-scale, automated evaluations with less variance. We should also watch for improved “Thinking” variants. The community already reports that GPT-5-Thinking and GPT-5-Pro can be useful for real problems. If those lines keep improving, research workflows will change fast.

What this means for the AI race

Benchmark gains tend to come in waves. One lab opens a lead. Others respond with new training tricks, more compute, or better data. The reported 31 percent score gives OpenAI a strong talking point. It will push rivals to focus on math reasoning and proof reliability. That competition should help users. Better math ability often spills into other domains, like code, science, and data analysis. The same skills (planning, checking, and revising) drive quality in those areas too. If this result holds up, we can expect a general lift in tools that handle long, careful reasoning.

Limits we should keep in mind

Benchmarks do not capture all real-world messiness. In practice, problem statements can be vague, data can be noisy, and goals can shift. Models that do well on fixed tasks may still stumble when context changes. Also, as the mathematician feedback shows, even good solutions can carry small gaps. Those gaps matter when you publish or ship. For now, the best approach is mix-and-match. Use the model for speed and breadth. Use humans for depth and final judgment. This hybrid approach is not a crutch; it is the strongest way to turn raw model power into reliable outcomes.

Bottom line on GPT-5.2 Pro FrontierMath results

The GPT-5.2 Pro FrontierMath results point to real progress in hard math reasoning. A 31 percent Tier 4 score, four first-time solves, and positive expert reviews make a clear case. The work is not perfect. Some explanations need sharper detail, and replication is important. But the direction is strong. For students, teachers, and researchers, this means faster exploration and better draft solutions, when paired with careful human checks. In short, the GPT-5.2 Pro FrontierMath results are a milestone worth noting, and a sign that math-capable AI is moving from promise to practice.

(Source: https://the-decoder.com/openais-gpt-5-2-pro-solves-math-problems-that-stumped-every-ai-model-before-it/)
