
10 May 2026


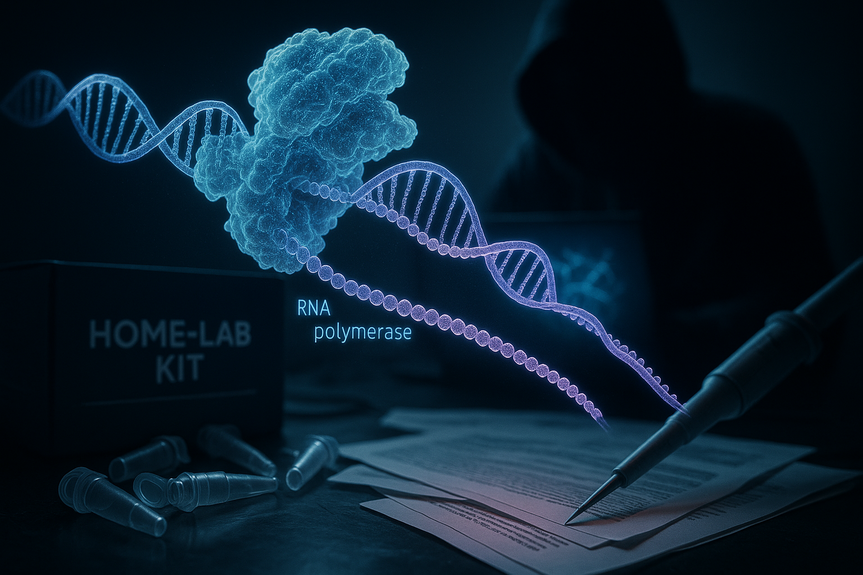

AI-enabled bioterrorism risk: How to detect and prevent it

AI-enabled bioterrorism risk requires real-time detection and practical safeguards to stop misuse.

Understanding AI-enabled bioterrorism risk

What is changing

– AI makes it easier to find and connect information.
– Some models can suggest steps, tools, and timelines.
– Biological parts and services are cheaper and ship fast.
– Online communities can unknowingly share risky tips.

These shifts do not mean disaster is certain. They do mean the margin for error is thinner. Clear rules and strong guardrails can keep helpful research open while stopping abuse.

How misuse could happen

– Search and planning: An attacker may use AI to plan tasks, find places to buy supplies, or look for ways to avoid checks.
– Misleading content: AI can create convincing lies to hide illness, confuse the public, or erode trust.
– Skill bridge: AI can turn scattered facts into stepwise guidance for a novice. Guardrails must block this.

Managing AI-enabled bioterrorism risk requires both technical and social solutions. No single control is enough, but together they work.

Early detection: layers that catch problems fast

Safer AI models and platforms

– Capability testing before release: Independently test models for risky outputs. Block or down-scope if needed.
– Guardrails and monitoring: Use safety filters, restricted tools, and human review for sensitive topics.
– Identity and rate limits: Verify high-risk users and cap usage to prevent automated abuse.
– Incident response: Provide a clear channel to report harmful outputs and fix them fast.

DNA and lab supply screening

– Sequence screening: Vendors should scan orders for known hazards and suspicious patterns.
– Customer checks: Verify buyers for sensitive items. Flag unusual orders and repeat attempts.
– Data sharing: Report blocked orders to trusted partners under privacy and legal rules.
– Standards: Align on common screening rules so attackers cannot shop around for weak links.

Biosurveillance that sees early signals

– Multiple data sources: Combine wastewater, clinic visits, over-the-counter drug sales, and school absences.
– Faster analytics: Use AI to spot odd clusters and send alerts to public health teams.
– Field validation: Back alerts with lab testing and local checks to cut false alarms.
– Secure data pipes: Protect patient privacy and stop tampering or spoofing of signals.

Prevention and resilience by design

Governance for powerful AI

– Risk-based access: Offer the most capable models via controlled channels with audits.
– Compute checks: Monitor very large training runs. Ask for disclosures and safety plans.
– Red teaming: Bring in domain experts to probe for bio-harm outputs before and after release.
– Transparency: Publish high-level safety reports so the public and researchers can hold providers to account.

Secure labs and digital systems

– Access control: Use badges, logs, and cameras for sensitive rooms and equipment.
– Inventory tracking: Track biological materials and critical gear from order to disposal.
– Cyberbiosecurity: Segment lab networks, lock down lab devices, and train staff on phishing and data theft.
– Vendor hygiene: Approve trusted suppliers and watch for spoofed storefronts or fake parts.

Public health readiness

– Fast diagnostics: Pre-arranged tests and platforms to detect new threats in days, not months.
– Medical countermeasures: Flexible vaccine and drug platforms, with clear plans to scale production.
– Surge capacity: Contracts for extra hospital beds, staff, and oxygen if a spike hits.
– Exercises: Regular drills that include AI misuse scenarios and cross-border coordination.

Communication that builds trust

Clear messages that beat rumors

– Single source of truth: Health agencies post updates on a set schedule with plain language.
– Rapid debunking: Pre-made templates and trained teams counter false claims within hours.
– Partner networks: Work with schools, local leaders, and platforms to spread accurate guidance.
– Open data: Share what is known, what is not, and what comes next to reduce fear.

Training people to spot and stop misuse

Skills for teams on the front line

– AI platform staff: Recognize and block risky prompts and patterns.
– Lab workers: Report odd orders, unusual requests, or safety rule-breaking.
– Health professionals: Flag strange case clusters and use secure reporting tools.
– Law enforcement and regulators: Understand digital trails tied to bio-related crimes.

Organizations can map AI-enabled bioterrorism risk to their own threat models, then set controls at the right depth. Start with the highest-impact, lowest-cost steps: strong model guardrails, vendor screening, and clear incident playbooks. Expand to richer biosurveillance and regular joint exercises as capacity grows.

Metrics that show progress

Track what matters

– Time to detect: From first signal to confirmed alert.
– Time to respond: From alert to action (e.g., blocks, advisories).
– Coverage: Share of DNA vendors and platforms using screening and guardrails.
– False positives and negatives: Tune systems to be both sensitive and precise.
– Exercise scores: Independent reviews of drills and after-action fixes.

Global coordination

Work across borders

– Common rules: Align on screening standards and AI safety baselines.
– Information sharing: Fast, secure channels for threat intel and incident reports.
– Capacity building: Fund labs and public health in lower-resourced regions.
– Accountability: Sanctions for firms or actors who enable clear abuse.

Strong defenses can live side by side with open science and innovation. The path forward is layered, measured, and fast. With tested guardrails, smart monitoring, and ready health systems, we can reduce AI-enabled bioterrorism risk while keeping the benefits of AI and modern biology.

(Source: https://www.economist.com/science-and-technology/2026/05/05/how-ai-tools-could-enable-bioterrorism)
