Claude outage pushes developers to relearn manual coding and stay productive offline

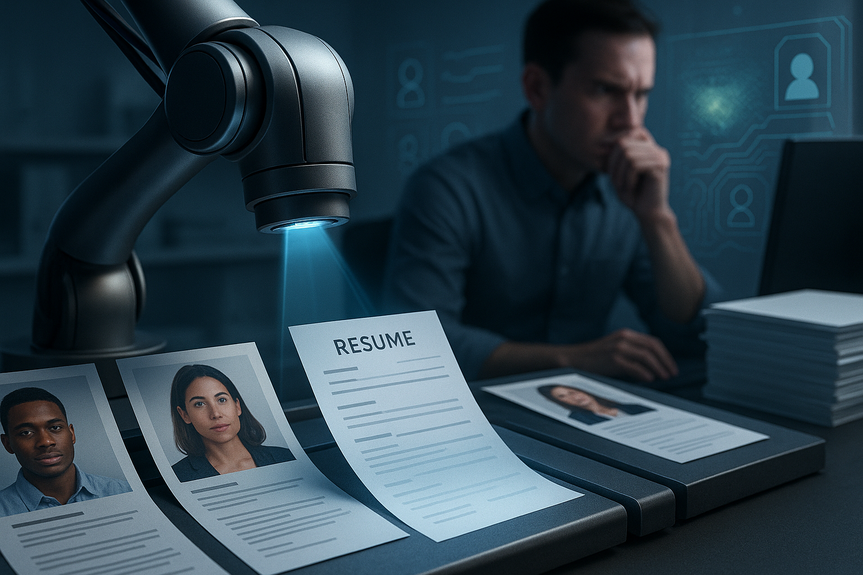

The Claude outage was a wake-up call for developers. When Anthropic’s AI tools went down, many engineers paused coding or switched tasks. The blackout showed how much day-to-day work now leans on AI for writing, debugging, and reviews, and why every team needs offline workflows, backups, and habits to keep shipping code.

In just a few years, AI coding assistants moved from novelty to default. Claude Code rose fast with strong reviews from working engineers, and usage spiked even more after public support for Anthropic grew. When service errors hit this week, developers across Reddit, Discord, and X admitted they felt stuck. Some pivoted to meetings and docs. Others joked they would “code like a caveman.” The lesson is simple: you can love AI and still plan for downtime.

Claude outage impact on developers: What the blackout revealed

Speed bred dependency

AI now drafts functions, explains stack traces, and proposes tests in seconds. That speed trained teams to treat AI as a single button. When that button failed, the impact on developers was immediate: context switching, missed estimates, and slower reviews.

Growth strained the pipes

Anthropic said rapid user growth stressed its systems. Claude topped app charts after a Pentagon dispute pushed many users to try or switch. Big shops like Meta, Netflix, and others also rely on AI coding tools from multiple vendors. Popularity creates pressure. Pressure reveals weak links.

Skills atrophy is real

Leaders already worry about over-reliance. If you rarely write boilerplate, remember syntax, or read raw compiler output, you get rusty. Downtime exposes that. The fix is not to stop using AI. It is to keep your base skills fresh and your toolchain resilient.

How to code offline when AI is down

Before an outage: Prepare your bench

Install offline docs: Zeal or DevDocs offline packs, man pages, language docs (JDK, Rust, Go, Python).

Keep local code search: ripgrep, grep, and a fast IDE index. Add ctags for jump-to-definition.

Automate linting and types: ESLint, Prettier, mypy, ruff, flake8, tsserver. Make “npm run check” or “make check” a habit.

Bundle tests: fast unit tests, a few high-value integration tests, and seed data in containers.

Cache dependencies: set up a local proxy or mirror for npm, PyPI, Maven, Cargo. Pre-pull key Docker images.

Save snippet libraries: common templates for APIs, DB access, logging, retries, and CLI parsing.

Keep a “no-AI” playbook: steps for triage, debugging, test-first flow, and code review checklists.
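A quick way to confirm the bench is really in place is a small preflight script. The sketch below is only an illustration: the tool list is an assumption based on the items above (ripgrep installs as `rg`), and `missing_tools` is a hypothetical helper name, not part of any existing tool.

```python
import shutil

# Assumed tool list for illustration; adjust to your own stack.
REQUIRED_TOOLS = ["git", "rg", "ctags", "make"]


def missing_tools(tools, which=shutil.which):
    """Return the names in `tools` that cannot be found on PATH."""
    return [name for name in tools if which(name) is None]


if __name__ == "__main__":
    missing = missing_tools(REQUIRED_TOOLS)
    if missing:
        print("Missing offline tools:", ", ".join(missing))
    else:
        print("Offline bench looks ready.")
```

Running it once a week, or from a pre-commit hook, surfaces a missing tool before the next outage does.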

During an outage: Work like an engineer, not a passenger

Break work small: write tiny functions, commit often, and keep PRs under 200–300 lines.

Write tests first: let failing tests guide the next line of code. TDD is your guardrail.

Read the error: parse stack traces, search the codebase, and reproduce in a REPL.

Use the shell: ripgrep for references, git bisect for regressions, and curl/Postman for smoke checks.

Debug out loud: rubber-duck complex logic, or pair for 15 minutes to unstick.

Refactor in steps: extract function, name things clearly, and delete dead code.

Document as you go: short comments and a concise CHANGELOG entry reduce rework later.
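To make the test-first step concrete, here is a minimal sketch of how it might look without an assistant. The `slugify` function and its test names are hypothetical examples. The tests are written in the plain-assert style that pytest collects, which assumes pytest is your runner.

```python
import re


# Step 1: write the tests first. They fail until slugify exists and is correct.
def test_spaces_become_hyphens():
    assert slugify("Hello World") == "hello-world"


def test_punctuation_collapses_to_one_hyphen():
    assert slugify("  Ship it, now!  ") == "ship-it-now"


def test_empty_title_gives_empty_slug():
    assert slugify("") == ""


# Step 2: write the smallest implementation that makes the tests pass.
def slugify(title: str) -> str:
    """Lowercase the title, turn runs of non-alphanumerics into one hyphen, trim hyphens."""
    return re.sub(r"[^a-z0-9]+", "-", title.lower()).strip("-")
```

Each failing test names the next small piece of behavior to write, which keeps commits and PRs small.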

After service returns: Close the loop

Compare ideas: ask AI to review your working code. Keep what improves clarity or safety.

Add tests for gaps: lock in new behavior so future changes stay safe.

Capture prompts: note what you would have asked the model. Save them to your team snippets.

Run a retro: list what slowed you down and what kept you shipping. Update the no-AI playbook.

Team safeguards to reduce future pain

Design for failure

Adopt multi-vendor AI: enable at least two assistants in your editor or chat stack.

Set fallbacks: if the primary model times out, route to a backup or a smaller local model for summaries and diffs.

Create SLAs and alerts: monitor latency, error rates, and token limits like any other dependency.
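The fallback idea can be sketched in a few lines. This is an illustration under stated assumptions, not any vendor's API: each assistant is modeled as a plain function that takes a prompt, enforces its own timeout, and raises an exception on failure. The names `ask_with_fallback`, `primary`, and `local_model` are hypothetical.

```python
from typing import Callable, List, Tuple

# An assistant is modeled as any callable that maps a prompt to a reply.
Assistant = Callable[[str], str]


def ask_with_fallback(prompt: str, assistants: List[Tuple[str, Assistant]]) -> Tuple[str, str]:
    """Try each assistant in order; return (name, reply) from the first that succeeds."""
    errors = []
    for name, call in assistants:
        try:
            return name, call(prompt)
        except Exception as exc:  # timeouts, HTTP errors, rate limits, etc.
            errors.append(f"{name}: {exc!r}")
    raise RuntimeError("all assistants failed: " + "; ".join(errors))


# Stub assistants standing in for real clients (hypothetical).
def primary(prompt: str) -> str:
    raise TimeoutError("primary timed out")


def local_model(prompt: str) -> str:
    return "summary of: " + prompt


print(ask_with_fallback("explain this diff", [("primary", primary), ("local", local_model)]))
# prints ('local', 'summary of: explain this diff')
```

Returning the assistant's name alongside the reply makes it easy to log how often the backup is serving requests, which feeds the alerts mentioned above.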

Keep skills alive

Run “no-AI Fridays” or short drills where teams ship with tests, docs, and tools only.

Do code-reading clubs: walk through core modules, not just PRs.

Reward fundamentals: clear designs, small PRs, test coverage, and incident write-ups.

Measure what matters

Track lead time, PR size, review time, change failure rate, and rollback rate.

Watch AI usage vs. defects: if AI saves time but bug rates rise, tighten reviews and test bars.
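A few of these numbers are simple ratios once deploy records exist. The sketch below assumes a hypothetical `Deploy` record with commit and deploy timestamps plus two flags, and it defines lead time as commit-to-deploy. Your own definitions and data source may differ.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median
from typing import Dict, List


@dataclass
class Deploy:
    """Hypothetical record of one production deploy."""
    committed_at: datetime
    deployed_at: datetime
    caused_failure: bool = False
    rolled_back: bool = False


def delivery_metrics(deploys: List[Deploy]) -> Dict[str, float]:
    """Compute median lead time (hours), change failure rate, and rollback rate."""
    if not deploys:
        raise ValueError("need at least one deploy")
    lead_hours = [
        (d.deployed_at - d.committed_at).total_seconds() / 3600 for d in deploys
    ]
    total = len(deploys)
    return {
        "median_lead_time_hours": median(lead_hours),
        "change_failure_rate": sum(d.caused_failure for d in deploys) / total,
        "rollback_rate": sum(d.rolled_back for d in deploys) / total,
    }
```

Computing the same metrics for weeks with and without assistant access gives a rough, local view of whether AI is saving time at the cost of more defects.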

Choosing resilient AI workflows

Balance convenience and control

IDE integration is great, but keep the CLI flow equal: commands for format, test, build, and release.

Prefer “AI as reviewer” over “AI as author” on critical code paths. Use it to propose diffs, not own them.

Cache useful AI outputs locally: saved refactors, migration plans, and repeatable prompts.
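One way to cache AI outputs locally is a small file-backed store keyed by a hash of the prompt. The sketch below is a minimal example under that assumption. `PromptCache` and its on-disk JSON layout are hypothetical, and it stores whatever text you pass in, so keep secrets out of it.

```python
import hashlib
import json
from pathlib import Path
from typing import Optional


class PromptCache:
    """Store prompt/output pairs as JSON files named by the prompt's SHA-256 digest."""

    def __init__(self, directory: str) -> None:
        self.dir = Path(directory)
        self.dir.mkdir(parents=True, exist_ok=True)

    def _path(self, prompt: str) -> Path:
        digest = hashlib.sha256(prompt.encode("utf-8")).hexdigest()
        return self.dir / f"{digest}.json"

    def save(self, prompt: str, output: str) -> None:
        record = {"prompt": prompt, "output": output}
        self._path(prompt).write_text(json.dumps(record, indent=2), encoding="utf-8")

    def load(self, prompt: str) -> Optional[str]:
        """Return the saved output for this exact prompt, or None if absent."""
        path = self._path(prompt)
        if not path.exists():
            return None
        return json.loads(path.read_text(encoding="utf-8"))["output"]
```

Because the files are plain JSON, they can be searched with ripgrep during an outage like any other notes.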

Mind vendor and policy risk

Public contracts and policy fights can shift usage overnight. The recent Pentagon dispute and user protests changed traffic patterns fast. Treat AI providers like any critical vendor: review terms, export options, data controls, and business continuity plans.

A practical offline workflow you can adopt today

One-hour setup

Install offline docs for your main language and framework.

Add ripgrep, ctags, and a Makefile with check, test, and build targets.

Enable strict linters and type checks in CI and pre-commit hooks.

Mirror key dependencies, pre-pull Docker images, and pin versions.

Create a 10-step “no-AI” checklist and pin it in your repo.
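If a Makefile does not suit the project, the same "check" habit can be sketched as a short script. The commands listed are assumptions for a Python codebase (`ruff check .`, `mypy .`, `pytest -q`), so swap in your own linters and test runner. `run_checks` is a hypothetical helper name.

```python
import subprocess

# Assumed check commands for a Python project; replace with your own.
CHECKS = [
    ("lint", ["ruff", "check", "."]),
    ("types", ["mypy", "."]),
    ("tests", ["pytest", "-q"]),
]


def run_checks(checks, runner=subprocess.call):
    """Run each command in order; return the first failing step's name, or None."""
    for name, cmd in checks:
        print(f"==> {name}: {' '.join(cmd)}")
        try:
            code = runner(cmd)
        except FileNotFoundError:
            # The tool is not installed; treat it as a failed step.
            code = 127
        if code != 0:
            return name
    return None
```

Calling `run_checks(CHECKS)` from a pre-commit hook or a CI step stops at the first failing stage, so the fastest checks are worth listing first.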

Daily habits

Write small, testable units first.

Commit early, commit often, push green builds.

Keep a personal snippet file and update it weekly.

End each day with five minutes of cleanups you do not ask AI to do.

In the end, the Claude outage should push teams to build resilience, not to abandon AI. Keep the speed. Add safeguards. Practice the basics. If the lights go out again, you will still ship.

(Source: https://www.businessinsider.com/claude-outages-anthropic-ai-software-engineers-developers-coding-dependance-2026-3)

FAQ

Q: What happened during the Claude outages this week?

A: Anthropic’s Claude.ai and Claude Code experienced “elevated errors” starting on Monday, with users reporting access issues into Tuesday, and an Anthropic spokesperson said the problems were resolved by Wednesday. Many engineers paused coding or switched to non‑coding tasks while the tools were unavailable.

Q: Who was affected by the outage and which companies use Claude Code?

A: Dozens of individual users posted about disruptions on Reddit, Discord, and X, and engineers at companies including Meta, Netflix, Salesforce, and Accenture use Claude Code. The outage affected people who rely on Claude for writing, debugging, and code reviews in their day‑to‑day work.

Q: Why did Anthropic say the outages occurred?

A: Boris Cherny, Anthropic’s head of Claude Code, said the recent outages were caused by rapid user growth straining their services. The company’s status page also reported “elevated errors” as users experienced access problems.

Q: How did the outages reveal developers’ dependency on AI?

A: The blackout showed teams treating AI as a “single button” for drafting functions, explaining stack traces, and proposing tests. When the tool failed, many engineers switched context, missed estimates, or postponed coding. The outage also highlighted concerns about skill atrophy and the need to keep core coding habits and offline workflows sharp.

Q: What offline preparations can individual developers make to stay productive during an AI outage?

A: Install offline documentation (Zeal, DevDocs offline packs, or man pages), keep fast local code search with tools like ripgrep and ctags alongside an IDE index, enable automated linters and type checks, and bundle fast unit tests and seed data. Also cache key dependencies and Docker images, save common snippet libraries, and maintain a short “no‑AI” playbook for triage and debugging steps.

Q: What practical steps should a developer take during an outage to keep shipping code?

A: Break work into small functions, write tests first to let failing tests guide development (TDD), and carefully read stack traces while reproducing failures in a REPL. Use shell tools like ripgrep, git bisect, and curl for triage, pair or rubber‑duck to unblock logic, refactor in small steps, and document changes as you go.

Q: After Claude or another AI service returns, how should teams close the loop?

A: Compare hand‑written solutions with AI suggestions and keep any changes that improve clarity or safety, then add tests to lock in the behavior you relied on during the outage. Capture useful prompts for team snippets, run a retrospective on what slowed you down, and update the no‑AI playbook and practices accordingly.

Q: What organizational safeguards can reduce future disruption from AI outages?

A: Adopt multi‑vendor AI setups, set fallbacks to secondary assistants or smaller local models, and treat AI providers like critical vendors with SLAs, monitoring, and alerts. Keep skills alive with “no‑AI” drills and code‑reading clubs, and track metrics such as lead time, PR size, review time, change failure rate, and rollback rate to see how outages affect delivery.