How AI affects critical thinking, and practical steps to protect your problem-solving skills today.

Studies are starting to show how AI affects critical thinking. Brain scans and workplace surveys suggest AI can lower mental effort, help tasks go faster, and weaken recall if overused. You can protect your skills by using AI as a tutor, verifying results, and practicing step-by-step reasoning.

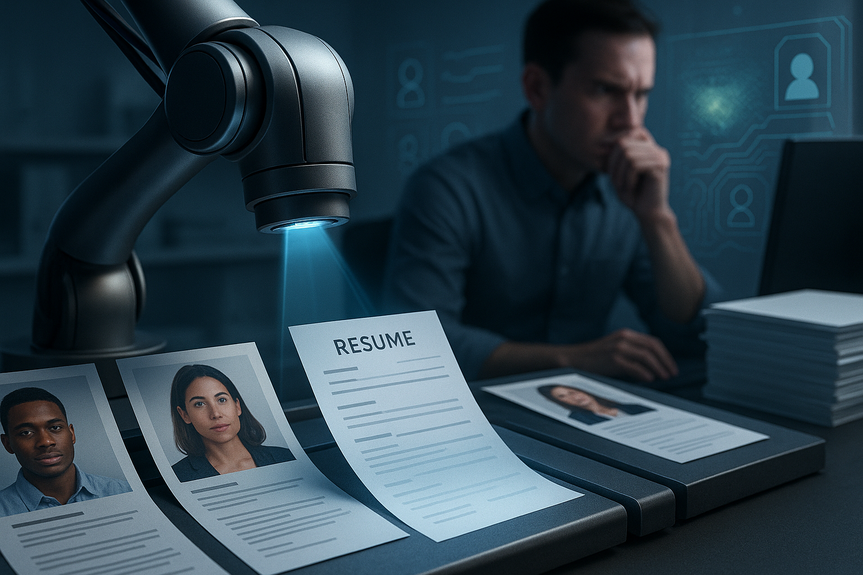

AI tools now sit in study halls, offices, and homes. They write drafts, check grammar, and mine data. This is helpful. It also changes how our brains work while we learn and solve problems. The latest research shows both gains and risks. The key is to stay active, not passive, when you use AI.

How AI affects critical thinking

What recent studies say

MIT researchers tracked brain activity while students wrote with and without a chatbot. They used EEG caps to measure effort as people drafted essays. Students who leaned on the chatbot showed lower activity in areas tied to cognitive processing during the task. They also struggled more to quote from their own essays afterward. This points to weaker engagement and recall.

A separate study from Carnegie Mellon University and Microsoft reviewed 900 AI-assisted tasks from 319 professionals. When people felt very confident in what the tool could do, they used less critical thinking. AI saved time, but users checked less and challenged less.

In UK schools, an Oxford University Press survey found a split view. Six in ten students felt AI hurt their skills. Yet nine in ten also said AI helped them with at least one area, like problem-solving or revision. Many asked for guidance on good use. The picture is mixed, and habits matter.

What brain and behavior studies suggest

Lower mental load can free you to focus on ideas. It can also dull the struggle that builds skill. These early results do not prove that AI weakens critical thinking in every case. But they warn us about “auto-pilot” use. When AI drafts, explains, and edits without your active pushback, your recall and judgment can fade.

Harvard Medical School studied clinicians using AI to read images. Some doctors improved with AI help. Others got worse. The reasons were not clear. The lesson: pairing people with AI needs care, testing, and training. Blind trust is risky.

The risk of skill atrophy in school and work

Better outputs, weaker learning

If a chatbot outlines your essay, your final grade may rise. But your own analysis may fall if you skip the hard thinking. Over time, this can erode the very skills school is meant to build.

Overreliance creates hidden costs

When workers stop checking assumptions, errors slip in. Teams may ship neat reports that rest on shaky logic. Confidence in the tool can mask shallow review. This harms quality and growth.

Use AI as a tutor, not a crutch

AI can guide you. It should not replace your effort. Treat it like a study partner that asks questions, gives hints, and checks your steps.

Prompts that keep you thinking

Ask for a Socratic dialog: “Ask me 5 questions to test my understanding, one at a time. Do not give the answer until I try.”

Request scaffolding: “Break this problem into steps. I will try each step before you show a sample answer.”

Demand reasoning: “Explain the why behind each step. Highlight common mistakes I should watch for.”

Compare paths: “Give two different methods to solve this. When should I pick each?”

Practice recall: “Hide your solution. Quiz me with spaced questions. Only reveal hints if I get stuck.”

Prompts that protect accuracy

Source checks: “List sources with links. State what each source adds. Mark any weak or uncertain claims.”

Adversarial check: “Critique the draft. Find three flaws or missing counterpoints. Suggest fixes.”

Boundary setting: “If you are unsure, say so. Ask me for more info rather than guessing.”

Practical rules for safe, smart AI use

Start with your own plan. Write a quick outline or hypothesis before you ask the model for help.

Time-box the assist. Draft first for 20 minutes, then use AI to review or extend, not to replace your effort.

Verify claims. Click sources. Cross-check facts with trusted references.

Explain it back. Summarize the idea in your own words without AI. If you can’t, you don’t know it yet.

Keep a learning log. Note where AI helped and where it hurt. Adjust your prompts and timing.

Guardrails for classrooms and offices

Be transparent. Require students and staff to disclose when and how they used AI.

Grade process, not just product. Credit outlines, drafts, notes, and reflections.

Teach prompt literacy. Show productive prompts that keep humans in the loop.

Set red lines. Ban AI for tasks that must show original thought or assessment.

Protect data. Explain what gets sent to tools and how it is stored.

Review outcomes. Sample work for accuracy, bias, and overreliance trends.

Schools and companies should set clear rules that shape how AI affects critical thinking on daily tasks. Policies should leave room for unaided practice rather than letting AI replace it.

Measure learning, not just outputs

Signals of overreliance

Fewer handwritten notes or outlines before AI prompts

Weaker recall of key points without the draft in view

Quick, polished text with shallow logic or missing evidence

High confidence but low verification habits

Signals of growth

Clear, step-by-step reasoning with evidence

Ability to restate ideas simply from memory

Willingness to revise after challenges and counterexamples

Consistent source checks and error finding

Simple tracking

Self-rate each task: percent human effort vs. AI assist

Record the first attempt score before AI review

List what you learned that you could not do last week
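
The tracking items above can be kept in a very small script. The following is a minimal sketch, not a prescribed tool: the names `TaskEntry` and `summarize`, the field names, and the 50 percent cutoff for flagging a task are all illustrative assumptions, and the score scale is whatever you already use.

```python
from dataclasses import dataclass, field
from statistics import mean


@dataclass
class TaskEntry:
    """One row in a personal learning log (all names here are hypothetical)."""
    task: str
    human_effort_pct: int        # self-rated share of the work you did yourself, 0-100
    first_attempt_score: float   # your score before any AI review, on your own scale
    learned: list[str] = field(default_factory=list)  # things you could not do last week


def summarize(entries: list[TaskEntry], low_effort_pct: int = 50) -> dict:
    """Average the self-ratings and list tasks below the effort cutoff.

    The default cutoff of 50 is an arbitrary assumption; pick your own.
    """
    if not entries:
        raise ValueError("log is empty")
    return {
        "avg_human_effort_pct": mean(e.human_effort_pct for e in entries),
        "avg_first_attempt_score": mean(e.first_attempt_score for e in entries),
        "low_effort_tasks": [e.task for e in entries if e.human_effort_pct < low_effort_pct],
        "new_skills": [skill for e in entries for skill in e.learned],
    }


# Example log with made-up entries.
log = [
    TaskEntry("essay outline", 80, 7.0, ["structure an argument from memory"]),
    TaskEntry("data summary", 30, 5.0),
]
report = summarize(log)
print(report)
```

Reviewing the flagged tasks once a week is one way to notice where the AI is doing most of the work, which is a cue to attempt the next similar task unaided first.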

We still have more to learn about how AI affects critical thinking. Early studies show both speed gains and real risks to effort and memory. The safest path is active use: plan first, ask strong questions, verify claims, and explain the result in your own words. Choose habits that let AI help while you keep your own mind in charge.

(Source: https://www.bbc.com/news/articles/cd6xz12j6pzo)

FAQ

Q: What do recent studies show about brain activity when people use AI to write?

A: MIT research using EEG found that people who used ChatGPT while drafting essays showed lower activity in brain networks associated with cognitive processing and had more trouble quoting from their own essays afterward. This illustrates one way AI affects critical thinking: by reducing active engagement during tasks.

Q: Can AI both help and harm learning at the same time?

A: Yes. An Oxford University Press survey found that nine in ten students reported at least one skill benefit from AI, while six in ten felt it had negatively impacted their schoolwork skills. Experts warn that outputs can improve even as understanding and recall decline, so benefits and harms can coexist depending on use.

Q: What workplace evidence suggests AI can change problem-solving effort?

A: A Carnegie Mellon and Microsoft review of 900 AI-assisted tasks from 319 professionals found that higher confidence in tools was linked to less critical thinking effort: workers saved time but checked and challenged outputs less. That pattern shows how AI can affect critical thinking at work when reliance replaces scrutiny.

Q: How can I use AI without letting it erode my problem-solving skills?

A: Use AI as a tutor by starting with your own plan, asking for step-by-step scaffolding, explaining results back in your own words, and verifying claims with trusted sources. These practices protect your skills by keeping you actively engaged.

Q: What prompts help keep me mentally active when using AI?

A: Prompts that keep you thinking include asking for a Socratic dialog that quizzes you one question at a time, requesting scaffolding that breaks a problem into steps, and demanding explanations of the why behind each step before revealing answers. Using these prompts forces you to attempt solutions first and preserves active learning.

Q: What signs indicate someone is becoming overreliant on AI?

A: Signals of overreliance include fewer handwritten notes or outlines before prompting AI, weaker recall of key points without the draft visible, polished text with shallow logic, and high confidence combined with low verification habits. These are practical indicators to watch if you want to catch skill atrophy early.

Q: What policies should schools and companies adopt to protect learning quality?

A: Recommended guardrails include requiring transparency about AI use, grading the process as well as the product, teaching prompt literacy, setting red lines for tasks requiring original thought, protecting data, and reviewing outcomes for overreliance trends. Together these policies aim to shape how AI affects critical thinking on daily tasks by maintaining practice and verification.

Q: How should teams verify AI-generated content to avoid errors and bias?

A: Teams should ask the model for source checks, run adversarial critiques to find flaws, time-box AI assistance, cross-check facts with trusted references, and require the model to flag uncertainty rather than guess. These steps match the article’s practical rules and prompts for protecting accuracy and reducing hidden costs from overreliance.