Identity-linked AI governance for enterprises prevents data leaks with centralized logging and audit.

Identity-linked AI governance for enterprises ties every AI request to a verified user or service account, applies consistent policies, and records a full audit trail. It reduces data leaks, speeds approvals, and meets compliance needs without slowing teams. Start by mapping identity to AI usage, centralizing controls, and monitoring outcomes across hosted and self-hosted models.

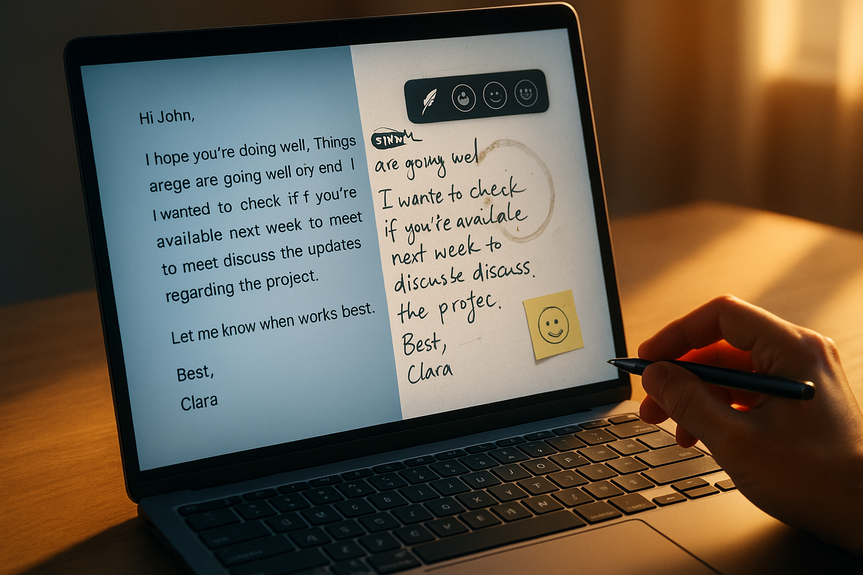

Many employees have moved past AI pilots, and often past the guardrails too, without realizing it. Studies show that about 34.8% of corporate data fed to AI is sensitive, and 48% of workers upload sensitive company data into public tools. Enterprises need a simple, strong way to link people, policies, and models. Identity-linked AI governance for enterprises answers this need with clear attribution, enforceable rules, and reliable logs.

Why identity-linked AI governance for enterprises matters

The risk shifted from apps to identities

AI agents and tools route data to third-party models and internal endpoints. Without identity, security teams cannot tell who sent what, where it went, or why. This creates blind spots and slows adoption. Tying AI usage to identity restores trust and control.

Compliance needs an audit trail

Privacy, safety, and export standards now apply to prompts, files, and outputs. If you cannot trace a request to a person or role, audits fail. Identity-linked governance produces the logs and context auditors expect.

Core building blocks

Map every AI call to a real identity

Authenticate users through your identity provider (SSO) and sync groups with SCIM.

Issue per-user and per-service API credentials; avoid shared keys.

Bind agents and automations to service accounts with clear owners.

Attach role and department metadata to each AI request.
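The attribution steps above can be sketched as a small gateway function. This is a minimal illustration, assuming a hypothetical in-memory `registry` that maps each API key to one `Principal` (in practice that mapping would come from your IdP via SSO/SCIM); `Principal`, `AttributionError`, and `attribute_request` are illustrative names, not part of any product API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Principal:
    """An authenticated identity; assumed to be sourced from your IdP via SSO/SCIM."""
    id: str
    kind: str                    # "user" or "service"
    role: str
    department: str
    owner: Optional[str] = None  # required for service accounts


class AttributionError(Exception):
    """Raised when a request cannot be tied to a single accountable identity."""


def attribute_request(api_key: str, registry: dict, shared_keys: set, request: dict) -> dict:
    """Return a copy of `request` annotated with identity metadata, or raise."""
    if api_key in shared_keys:
        raise AttributionError("shared keys are blocked")
    principal = registry.get(api_key)
    if principal is None:
        raise AttributionError("unknown credential")
    if principal.kind == "service" and not principal.owner:
        raise AttributionError(f"service account {principal.id} has no owner")
    annotated = dict(request)  # leave the caller's dict untouched
    annotated["identity"] = {
        "principal": principal.id,
        "kind": principal.kind,
        "role": principal.role,
        "department": principal.department,
    }
    return annotated
```

Rejecting shared keys and ownerless service accounts before any model call means every request that reaches a model already carries a person or an accountable owner.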

Centralize policy and authorization

Adopt role-based and attribute-based access control (RBAC/ABAC) policies, evaluated by a policy decision point (PDP) and applied by a policy enforcement point (PEP).

Use established engines (for example, Oso or Cerbos) to express and version rules.

Define rules by role, model, data class, location, and time.
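To make the PDP/PEP split concrete, here is a plain-Python stand-in. It is not Oso or Cerbos syntax; it only shows a default-deny decision over role, model, data class, region, and time window, with a separate enforcement step that consults the decision before forwarding. The request field names are assumptions.

```python
from dataclasses import dataclass
from datetime import time
from typing import Callable


@dataclass(frozen=True)
class Rule:
    """One allow rule; a request must match every attribute to be allowed."""
    roles: frozenset
    models: frozenset
    data_classes: frozenset
    regions: frozenset
    start: time = time(0, 0)
    end: time = time(23, 59, 59)

    def matches(self, req: dict) -> bool:
        return (req["role"] in self.roles
                and req["model"] in self.models
                and req["data_class"] in self.data_classes
                and req["region"] in self.regions
                and self.start <= req["at"] <= self.end)


def decide(rules: list, req: dict) -> bool:
    """Policy decision point (PDP): default deny unless some rule matches."""
    return any(rule.matches(req) for rule in rules)


def enforce(rules: list, req: dict, forward: Callable[[dict], dict]) -> dict:
    """Policy enforcement point (PEP): ask the PDP, then forward or refuse."""
    if not decide(rules, req):
        return {"status": 403, "reason": "denied by policy"}
    return forward(req)
```

Keeping the rules as data, separate from the enforcement code, is what lets them be versioned and reviewed independently, which is the main benefit a dedicated engine adds on top of this sketch.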

Log, monitor, and audit

Capture prompts, attachments, model, parameters, requester identity, and outcomes.

Redact secrets and personal data in logs while keeping enough context to investigate.

Route logs to your SIEM or observability stack (for example, via Cribl) for alerting and retention.
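A minimal sketch of a redacted audit record, assuming JSON-lines output that a log pipeline (for example Cribl) would pick up and forward to a SIEM; the shipping step is not shown. The regex patterns are illustrative only and far from complete DLP coverage, and the record schema is an assumption.

```python
import json
import re
from datetime import datetime, timezone

# Illustrative patterns only; production redaction needs much broader coverage.
REDACTIONS = [
    (re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"), "[EMAIL]"),
    (re.compile(r"\b\d{3}-\d{2}-\d{4}\b"), "[SSN]"),
    (re.compile(r"\b(?:sk|api)[-_][A-Za-z0-9]{16,}\b"), "[SECRET]"),
]


def redact(text: str) -> str:
    """Replace each matched pattern with its placeholder label."""
    for pattern, label in REDACTIONS:
        text = pattern.sub(label, text)
    return text


def audit_record(identity: dict, model: str, params: dict, prompt: str,
                 attachments: list, outcome: str) -> str:
    """Build one JSON line with the requester, model, redacted prompt, and outcome."""
    record = {
        "ts": datetime.now(timezone.utc).isoformat(),
        "identity": identity,
        "model": model,
        "params": params,
        "prompt": redact(prompt),
        "attachments": attachments,  # file names only, never file contents
        "outcome": outcome,
    }
    return json.dumps(record, sort_keys=True)
```

Placeholders such as `[EMAIL]` keep the shape of the prompt visible to investigators without storing the sensitive value itself.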

Classify and protect data

Auto-tag content as public, internal, confidential, or regulated.

Apply DLP rules: block uploads of PII, source code, or client data unless approved.

Filter prompts and outputs for sensitive terms; allow human review for borderline cases.
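The classify-then-decide flow can be sketched with ordered data classes and a few detectors. The detectors below (an SSN-like pattern, source-code line starts, the words "client" and "customer", and the review terms) are hypothetical stand-ins for a real classifier; the point is the decision shape: block confidential or regulated content unless approved, and send borderline content to human review.

```python
import re
from enum import IntEnum


class DataClass(IntEnum):
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    REGULATED = 3


# Hypothetical detectors; each maps a match to the class it implies.
DETECTORS = [
    (re.compile(r"\b\d{3}-\d{2}-\d{4}\b"), DataClass.REGULATED),           # SSN-like PII
    (re.compile(r"^\s*(def |class |import |#include)", re.M), DataClass.CONFIDENTIAL),  # code
    (re.compile(r"\bclient\b|\bcustomer\b", re.I), DataClass.CONFIDENTIAL),  # client data
    (re.compile(r"\binternal only\b", re.I), DataClass.INTERNAL),
]
REVIEW_TERMS = re.compile(r"\b(draft|unreleased)\b", re.I)  # borderline signals


def classify(text: str) -> DataClass:
    """Return the most sensitive class any detector finds (PUBLIC if none)."""
    level = DataClass.PUBLIC
    for pattern, cls in DETECTORS:
        if pattern.search(text):
            level = max(level, cls)
    return level


def dlp_decision(text: str, approved: bool) -> str:
    """Return 'block', 'review', or 'allow' for a prompt or output."""
    if classify(text) >= DataClass.CONFIDENTIAL and not approved:
        return "block"
    if REVIEW_TERMS.search(text):
        return "review"
    return "allow"
```

Using an ordered enum makes "at least confidential" a single comparison, so policies can be written against thresholds rather than lists of labels.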

Support hosted and self-hosted endpoints

Route traffic to hosted providers such as OpenAI, Anthropic, Google Gemini, OpenRouter, and Vercel through a single governance gateway.

Apply the same identity, policy, and logging to self-hosted models.

Cover popular coding agents and CLIs like Claude Code, Codex, and Gemini CLI.
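One way to apply the same controls to hosted and self-hosted endpoints is a single resolve step that runs every control, then looks up an allowlisted upstream. This is a sketch under stated assumptions: the model names and URLs are hypothetical placeholders, and the rule that regulated data must stay on self-hosted models is borrowed from the policy examples later in this article.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Upstream:
    provider: str   # e.g. "openai", "anthropic", "self-hosted"
    base_url: str   # placeholder URLs; substitute your real endpoints
    hosted: bool    # True for third-party hosted, False for self-hosted


# Hypothetical model names; any model not listed here is denied.
ROUTES = {
    "hosted-model-a": Upstream("openai", "https://hosted.example/openai", True),
    "hosted-model-b": Upstream("anthropic", "https://hosted.example/anthropic", True),
    "local-model": Upstream("self-hosted", "http://llm.internal.example:8000", False),
}


def resolve(request: dict, controls: list[Callable[[dict], None]]) -> Upstream:
    """Run identical controls for every request, then pick an allowed upstream."""
    for control in controls:
        control(request)  # each control raises an exception on violation
    upstream = ROUTES.get(request["model"])
    if upstream is None:
        raise PermissionError(f"model {request['model']!r} is not on the allowlist")
    if request.get("data_class") == "regulated" and upstream.hosted:
        raise PermissionError("regulated data must stay on self-hosted models")
    return upstream
```

Because the controls run before the route is chosen, a self-hosted model gets exactly the same identity, policy, and logging checks as a hosted one.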

A practical rollout plan

Phase 1: Discover and contain

Inventory AI tools, agents, and endpoints in use (including shadow tools).

Create a model allowlist and a default-deny policy for unknown endpoints.

Route all AI traffic through a control plane to attach identity and logs.
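For Phase 1, a rough inventory can come from egress logs you already collect. The sketch below assumes logs reduced to `(source, host)` pairs and a hypothetical gateway source name `ai-gateway`; any direct call from another source to a known AI host is flagged as shadow usage. The host list is a seed you would extend, and the upstream allowlist shows the default-deny rule for unknown endpoints.

```python
from collections import Counter

# Seed list of public AI API hosts to watch for; extend it from your own logs.
KNOWN_AI_HOSTS = {
    "api.openai.com",
    "api.anthropic.com",
    "generativelanguage.googleapis.com",
    "openrouter.ai",
}
GATEWAY_SOURCE = "ai-gateway"  # hypothetical name of the governance gateway
UPSTREAM_ALLOWLIST = {"api.openai.com", "api.anthropic.com"}  # example only


def find_shadow_ai(egress: list) -> dict:
    """Map each AI host reached directly (not via the gateway) to per-source counts."""
    shadow: dict = {}
    for source, host in egress:
        if host in KNOWN_AI_HOSTS and source != GATEWAY_SOURCE:
            shadow.setdefault(host, Counter())[source] += 1
    return shadow


def upstream_allowed(host: str) -> bool:
    """Default deny: the gateway may call only explicitly allowlisted endpoints."""
    return host in UPSTREAM_ALLOWLIST
```

The per-source counts show who relies on each shadow tool, which helps decide whether to block it or fast-track its approval.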

Phase 2: Pilot and refine

Onboard a developer team first; measure productivity and policy friction.

Tune rules for token budgets, data classes, and model access by role.

Build dashboards for usage, costs, and incidents.

Phase 3: Scale safely

Extend to customer support, marketing, and finance with role-based policies.

Run training that shows do/don’t examples inside everyday tools.

Review policies monthly; adjust based on risk, cost, and feedback.

Policy examples you can adopt today

Only approved roles can send confidential data to external hosted models; regulated data stays on self-hosted models.

All prompts and outputs must be linked to a user or service account; shared keys are blocked.

Code repositories: non-engineering roles get read-only access; AI-generated write suggestions require human review.

PII masking: redact phone numbers, email addresses, and Social Security numbers (SSNs) from prompts before model submission.

Geofencing: traffic for EU data stays within EU-hosted models and storage.

Cost guardrails: per-user and per-team token budgets with alerts and soft stops.
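The cost guardrail can be sketched as a tracker that checks both the user and the team budget on every request. Assumptions: an alert fires at 80% of a limit, and a "soft stop" means the request is held unless an explicit override (for example, a manager's approval) is passed, in which case it is charged anyway. Names and thresholds are illustrative.

```python
from dataclasses import dataclass, field


@dataclass
class Budget:
    limit: int                 # tokens allowed per budget period
    alert_ratio: float = 0.8   # assumed alert threshold (80% of limit)
    used: int = 0


@dataclass
class BudgetTracker:
    users: dict = field(default_factory=dict)   # user -> Budget
    teams: dict = field(default_factory=dict)   # team -> Budget

    def charge(self, user: str, team: str, tokens: int, override: bool = False) -> str:
        """Return 'soft_stop', 'alert', or 'ok'; a soft stop charges nothing."""
        budgets = [self.users[user], self.teams[team]]
        if not override and any(b.used + tokens > b.limit for b in budgets):
            return "soft_stop"
        for b in budgets:
            b.used += tokens
        if any(b.used >= b.alert_ratio * b.limit for b in budgets):
            return "alert"
        return "ok"
```

Checking both budgets before charging either keeps the user and team totals consistent when a request is held.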

Metrics that prove it works

Percent of AI requests with verified identity (target: 100%).

Data exfiltration attempts blocked vs. allowed (trend down over time).

Mean time to investigate an AI incident (target: minutes, not days).

Model usage by role vs. policy (target: zero policy drift).

Cost per task and per department vs. budget.

Coverage of data classification across prompts and outputs.
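Several of these metrics fall out of the audit records directly. The sketch assumes a hypothetical record schema (`principal`, `outcome`, `role`, `model`, `department`, `cost_usd`, `data_class`) and counts policy drift as allowed requests that used a model not approved for the requester's role. Mean time to investigate needs incident data and is not covered here.

```python
from collections import defaultdict


def governance_metrics(records: list, allowed_models: dict) -> dict:
    """Compute identity coverage, block counts, drift, cost, and classification coverage."""
    total = len(records)
    if total == 0:
        return {}
    verified = sum(1 for r in records if r.get("principal"))
    blocked = sum(1 for r in records if r["outcome"] == "blocked")
    drift = sum(1 for r in records
                if r["outcome"] == "allowed"
                and r["model"] not in allowed_models.get(r["role"], set()))
    classified = sum(1 for r in records if r.get("data_class") is not None)
    cost_by_dept = defaultdict(float)
    for r in records:
        cost_by_dept[r["department"]] += r.get("cost_usd", 0.0)
    return {
        "identity_coverage_pct": 100.0 * verified / total,
        "blocked": blocked,
        "allowed": total - blocked,
        "policy_drift": drift,
        "classification_coverage_pct": 100.0 * classified / total,
        "cost_by_department": dict(cost_by_dept),
    }
```

Running this over each day's logs gives the trend lines the targets above call for.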

How tools like Aperture help

Tailscale’s Aperture (open alpha) shows how a governance gateway can tie AI usage to identity, apply centralized policies, and create consistent audit logs. It supports hosted and self-hosted endpoints, including OpenAI, Anthropic, Google Gemini, OpenRouter, and Vercel, and works with coding agents like Claude Code, Codex, and Gemini CLI. Partnerships with Oso, Cerbos, Apollo Research PBC, and Cribl help teams combine granular authorization with strong observability. Aperture is free during alpha across all plans, with pricing to come at broader release.

Common pitfalls and how to avoid them

Shadow AI: block unknown endpoints; provide a fast path to approve new tools.

Overblocking: start with clear allowlists and progressive restrictions; monitor user impact.

Missing service-account ownership: require owners, rotation schedules, and offboarding steps.

Audit gaps: verify end-to-end logging from request to response; test with drills.

Brittle rules: version control policies, test in staging, and roll out gradually.

Privacy concerns: minimize and redact logs; define retention aligned with legal needs.
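The service-account pitfall lends itself to a scheduled check. This sketch assumes a hypothetical inventory of `ServiceAccount` entries, a set of currently active users exported from your IdP, and a 90-day rotation window chosen only for illustration.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class ServiceAccount:
    name: str
    owner: Optional[str]
    last_rotated: date


def audit_service_accounts(accounts: list, active_users: set, today: date,
                           max_age_days: int = 90) -> dict:
    """Flag accounts with no owner, an offboarded owner, or overdue key rotation."""
    findings = {}
    for acct in accounts:
        issues = []
        if not acct.owner:
            issues.append("no owner")
        elif acct.owner not in active_users:
            issues.append("owner offboarded")
        if today - acct.last_rotated > timedelta(days=max_age_days):
            issues.append("rotation overdue")
        if issues:
            findings[acct.name] = issues
    return findings
```

Feeding the findings into the same alerting path as other governance events keeps ownership and rotation gaps from going unnoticed.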

Security and compliance checklist

Identity mapped to every AI request (user or service account).

Central policies enforced at a single gateway.

Consistent logs with redaction, retention, and export to SIEM.

Data classification and DLP for prompts and outputs.

Model allowlist, geofencing, and token budgets.

Regular training, policy reviews, and incident exercises.

The fastest path to safe productivity is simple: connect people and agents to models through a single identity-aware gateway, apply clear policies, and watch the results. With identity-linked AI governance for enterprises, you reduce data risk, speed audits, and keep teams moving without guesswork.

Source: https://siliconangle.com/2026/02/17/secure-networking-startup-tailscale-launches-identity-linked-governance-ai-tools-agents/

FAQ

Q: What is identity-linked AI governance for enterprises?

A: Identity-linked AI governance for enterprises ties every AI request to a verified user or service account, applies consistent policies, and records a full audit trail. It reduces data leaks, speeds approvals, and helps meet compliance needs while keeping teams productive.

Q: Why is identity-linked AI governance important for enterprise security and compliance?

A: Many companies face blind spots because IT teams and users often don’t know who or what is sending data to third-party models, which increases leakage risk. Studies cited in the article report that about 34.8% of corporate data fed to AI is sensitive and that 48% of workers upload sensitive company data into public tools. Identity-linked governance addresses this with clear attribution and reliable logs for audits.

Q: What are the core building blocks of identity-linked AI governance?

A: Core building blocks include mapping every AI call to a real identity, centralizing policy and authorization with a policy decision point and enforcement point, and logging and monitoring prompts, parameters, requester identity, and outcomes. They also require data classification and DLP rules, routing logs to a SIEM or observability stack, and applying the same controls across hosted and self-hosted models.

Q: How do organizations map AI requests to verified identities in practice?

A: Use your identity provider (SSO) and SCIM for group sync, issue per-user and per-service API credentials instead of shared keys, bind agents and automations to service accounts with clear owners, and attach role and department metadata to each AI request. These measures ensure each request can be attributed to a person or service and support centralized logging and audits.

Q: How should logging and auditing be implemented under identity-linked AI governance?

A: Capture prompts, attachments, the model and parameters, requester identity, and outcomes while redacting secrets and personal data to keep enough context for investigations. Route logs to your SIEM or observability stack (for example via Cribl) for alerting and retention, and verify end-to-end logging from request to response with drills.

Q: What is a practical rollout plan for adopting identity-linked AI governance?

A: Adopt a phased approach. Phase 1 (discover and contain): inventory AI tools, create a model allowlist and a default-deny policy, and route AI traffic through a control plane that attaches identity and logs. Phase 2 (pilot and refine): onboard a developer team, tune rules, and build dashboards. Phase 3 (scale safely): extend to other functions with training and monthly policy reviews. This staged path limits disruption while improving controls, visibility, and compliance readiness.

Q: Which AI providers and agents should identity-linked governance support, and does Aperture support them?

A: Governance should cover both hosted and self-hosted endpoints and popular providers such as OpenAI, Anthropic, Google Gemini, OpenRouter, and Vercel, along with coding agents and CLIs like Claude Code, Codex, and Gemini CLI. Tools like Tailscale’s Aperture in open alpha help implement identity-linked AI governance for enterprises by supporting these endpoints and tying usage to identity with centralized policies and logs.

Q: What metrics should organizations track to measure the success of identity-linked AI governance?

A: Key metrics include the percent of AI requests with verified identity (target: 100%), data exfiltration attempts blocked versus allowed, mean time to investigate AI incidents (target: minutes), and model usage by role versus policy to detect drift. Organizations should also track cost per task or department and coverage of data classification across prompts and outputs to measure efficiency and residual risk.