27 Feb 2026

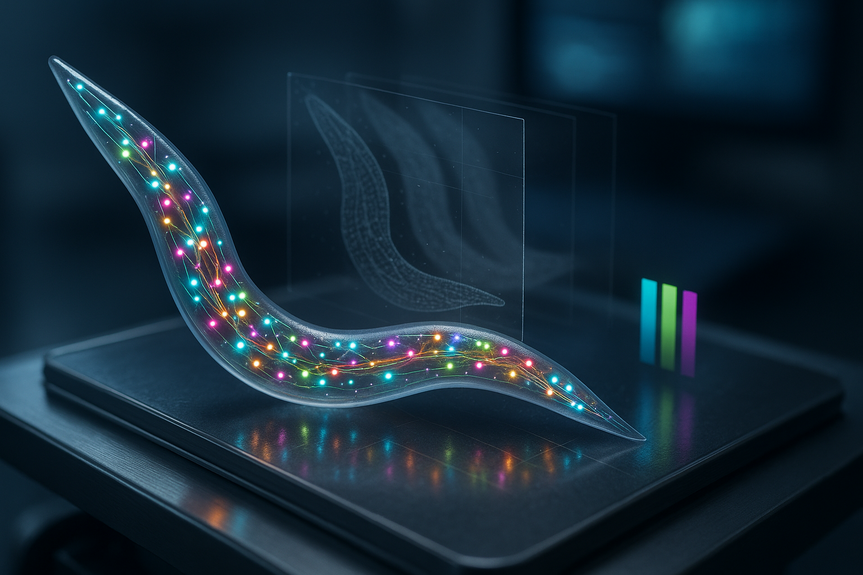

How to track neurons in moving animals 600x faster

Track neurons in moving animals with AI that aligns and labels cells in seconds, saving weeks of work.

How AI helps track neurons in moving animals

BrainAlignNet: fast, pixel-precise alignment
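
Pixel-precise alignment of a deforming body is commonly expressed as a dense displacement field: for each pixel of a reference frame, an offset pointing to where that tissue sits in the moving frame. The sketch below assumes such a field has already been estimated (the article does not describe BrainAlignNet's internals) and shows only how it might be applied and scored. `warp_image` and `landmark_errors` are hypothetical helpers, and scoring by the share of landmarks that land within one pixel is an assumed metric, not necessarily the one the study used.

```python
import numpy as np

# Hypothetical helpers for illustration; not BrainAlignNet's API.


def warp_image(moving, disp):
    """Resample a 2-D `moving` frame onto the fixed frame's pixel grid.

    disp has shape (H, W, 2); disp[y, x] = (dy, dx) means "the content that belongs
    at fixed pixel (y, x) sits at (y + dy, x + dx) in the moving frame".
    Uses bilinear interpolation and clamps samples to the image border.
    """
    h, w = moving.shape
    ys, xs = np.meshgrid(np.arange(h), np.arange(w), indexing="ij")
    sy = np.clip(ys + disp[..., 0], 0, h - 1)
    sx = np.clip(xs + disp[..., 1], 0, w - 1)
    y0, x0 = np.floor(sy).astype(int), np.floor(sx).astype(int)
    y1, x1 = np.minimum(y0 + 1, h - 1), np.minimum(x0 + 1, w - 1)
    wy, wx = sy - y0, sx - x0  # fractional offsets used as interpolation weights
    top = moving[y0, x0] * (1 - wx) + moving[y0, x1] * wx
    bottom = moving[y1, x0] * (1 - wx) + moving[y1, x1] * wx
    return top * (1 - wy) + bottom * wy


def landmark_errors(fixed_pts, moving_pts, disp):
    """Distance (in pixels) between where the field sends each fixed landmark
    and where its matching landmark really is in the moving frame."""
    errs = []
    for (py, px), (my, mx) in zip(fixed_pts, moving_pts):
        dy, dx = disp[int(py), int(px)]
        errs.append(np.hypot(py + dy - my, px + dx - mx))
    return np.array(errs)


# Toy example: one bright "cell" that shifted one pixel to the right.
fixed = np.zeros((5, 5))
fixed[2, 2] = 1.0
moving = np.roll(fixed, 1, axis=1)
disp = np.zeros((5, 5, 2))
disp[..., 1] = 1.0  # sample one pixel to the right in the moving frame

aligned = warp_image(moving, disp)
errs = landmark_errors([(2, 2)], [(2, 3)], disp)
print(np.allclose(aligned, fixed), (errs <= 1.0).mean())  # True 1.0
```

In a real pipeline the displacement field would come from the registration model; applying and scoring it stays this simple.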

BrainAlignNet solves the “where did that cell go?” problem. It registers (aligns) pairs of images even when a roundworm's head bends or a jellyfish's body deforms. It runs about 600 times faster than older pipelines, and tests show 99.6% accuracy at the single-pixel level. The team used it in C. elegans and also in the jellyfish Clytia hemisphaerica, which twists and stretches in many ways.

AutoCellLabeler: naming neurons on the fly
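
Naming a neuron ultimately means mapping each detected cell's measurements, such as its brightness in each color channel, to the most likely identity plus a confidence. The sketch below uses a nearest-centroid classifier as a deliberately simple stand-in for a learned labeler; it is not AutoCellLabeler's method. `fit_centroids` and `label_cell` are hypothetical, AVA and RIM serve only as example labels, and the color values are invented. The `channels` argument mimics imaging with fewer colors.

```python
import math

# Hypothetical stand-in for a learned labeler: nearest centroid over per-channel
# color intensities. Labels and feature values below are illustrative only.


def fit_centroids(examples):
    """Average the feature vectors of human-labeled cells, one centroid per identity.

    examples: list of (identity, features) pairs; features are per-channel intensities.
    """
    sums, counts = {}, {}
    for name, feats in examples:
        acc = sums.setdefault(name, [0.0] * len(feats))
        for i, value in enumerate(feats):
            acc[i] += value
        counts[name] = counts.get(name, 0) + 1
    return {name: [v / counts[name] for v in acc] for name, acc in sums.items()}


def label_cell(centroids, feats, channels=None):
    """Return (best identity, confidence) for one detected cell.

    `channels` optionally restricts the comparison to a subset of color channels,
    mimicking a sample imaged with fewer colors. Confidence is a softmax over
    negative distances, a simple heuristic rather than a calibrated probability.
    """
    idx = list(channels) if channels is not None else list(range(len(feats)))
    dists = {
        name: math.dist([c[i] for i in idx], [feats[i] for i in idx])
        for name, c in centroids.items()
    }
    weights = {name: math.exp(-d) for name, d in dists.items()}
    best = min(dists, key=dists.get)
    return best, weights[best] / sum(weights.values())


training = [
    ("AVA", [0.9, 0.1, 0.2]), ("AVA", [0.8, 0.2, 0.1]),
    ("RIM", [0.1, 0.9, 0.3]), ("RIM", [0.2, 0.8, 0.4]),
]
centroids = fit_centroids(training)
print(label_cell(centroids, [0.85, 0.15, 0.2]))                # all three channels
print(label_cell(centroids, [0.85, 0.15, 0.2], channels=[0]))  # a single channel
```

In this toy case the single-channel call still picks the right label, but with lower confidence, which is the kind of call a reviewer would want flagged.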

AutoCellLabeler answers “which neuron is this?” It learns from human-labeled examples and from multi-color genetic tags such as NeuroPAL. It identified over 100 neuron types in the worm head with 98% accuracy. It still performed well with fewer color channels, reducing the need for perfect staining. Labeling that once took trained staff up to five hours per animal now takes seconds.

CellDiscoveryNet: unsupervised cell discovery
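
Finding cell types without labels is, at its core, a clustering problem: pool cells from many animals, group cells with similar features, and treat each group as a candidate type. The sketch below uses plain k-means in NumPy as a stand-in; CellDiscoveryNet is a learned model whose method the article does not detail. The two-dimensional features, the number of clusters `k`, and the `kmeans` and `one_animal` helpers are all assumptions for illustration.

```python
import numpy as np

# Stand-in for unsupervised cell-type discovery: plain k-means on pooled cells.
# Not CellDiscoveryNet's method; features and k are illustrative assumptions.


def kmeans(x, k, iters=100, seed=0):
    """Cluster the rows of x (n_cells x n_features) into k groups.

    Returns (labels, centers). Centers start at k distinct randomly chosen cells.
    """
    rng = np.random.default_rng(seed)
    centers = x[rng.choice(len(x), size=k, replace=False)]
    for _ in range(iters):
        # Assign every cell to its nearest center.
        dists = np.linalg.norm(x[:, None, :] - centers[None, :, :], axis=2)
        labels = dists.argmin(axis=1)
        # Move each center to the mean of its members; keep it if the cluster is empty.
        new_centers = np.array([
            x[labels == j].mean(axis=0) if np.any(labels == j) else centers[j]
            for j in range(k)
        ])
        if np.allclose(new_centers, centers):
            break
        centers = new_centers
    return labels, centers


def one_animal(rng):
    """Ten cells of a type near (0, 0) followed by ten cells of a type near (5, 5)."""
    return np.vstack([rng.normal(0.0, 0.3, (10, 2)), rng.normal(5.0, 0.3, (10, 2))])


rng = np.random.default_rng(1)
pooled = np.vstack([one_animal(rng), one_animal(rng)])  # rows 0-19: animal A, 20-39: animal B
labels, _ = kmeans(pooled, k=2)

# The same type should land in the same cluster in both animals.
print(labels[:10], labels[20:30])
```

When the same type falls into one cluster in both animals, the cluster index doubles as a cross-animal identity. Real data would need a principled choice of `k` and far richer features.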

CellDiscoveryNet goes further: it compares data across many animals and clusters cells into types without any human labels. In tests, it matched expert performance at identifying more than 100 neuron types. This helps when labels are missing, when dyes vary, or when the goal is to map a nervous system at scale.

Why this matters for brain-and-behavior science

Neuroscientists want to link activity patterns to actions. That requires consistent cell tracking across time and across animals, and these tools make it practical:
– Speed: Process long videos in minutes, not months.
– Accuracy: Single-pixel alignment and near-human (or better) labeling.
– Scale: Apply the same pipeline across many animals and experiments.
– Flexibility: Works in wiggly worms and deforming jellyfish, and adapts to fewer color channels.
– Cost: Replaces expensive, repetitive manual annotation with automation.

From data deluge to insight

Modern microscopes flood labs with images, and researchers often have to trade speed for accuracy or vice versa. With BrainAlignNet, AutoCellLabeler, and CellDiscoveryNet, teams can have both: they can align, identify, and compare neurons across whole datasets while preserving the details that matter for decoding circuits.

From worms to jellyfish and beyond

The tools were built for transparent animals like C. elegans, where every neuron can be made to glow. But the core ideas (non-rigid registration and learned cell identity) fit many imaging tasks. Researchers have already used BrainAlignNet on jellyfish, where any part of the body can move relative to any other. The same approach could help map cells in other organisms or in human tissue sections.

What it takes to put this to work

Data and labels

– Multi-spectral fluorescence improves labeling, but the system can handle fewer colors.
– A small set of expert labels can bootstrap AutoCellLabeler.
– For cross-animal mapping without labels, use CellDiscoveryNet.

Workflow basics
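
After frames are aligned, linking a cell from one frame to the next can be as simple as pairing each detected centroid with the nearest unclaimed centroid in the following frame, up to a distance cutoff. The sketch below is a hypothetical greedy linker, not part of BrainAlignNet; `link_frames`, the coordinates, and the `max_dist` default are made up.

```python
import math

# Hypothetical greedy linker for an aligned frame pair; not part of BrainAlignNet.


def link_frames(prev_pts, next_pts, max_dist=2.0):
    """Link cell centroids between two already-aligned frames.

    Returns {index in prev_pts: index in next_pts}. Closest pairs are linked first,
    each cell is used at most once, and pairs farther apart than max_dist
    (in pixels) are left unlinked.
    """
    pairs = sorted(
        (math.dist(p, q), i, j)
        for i, p in enumerate(prev_pts)
        for j, q in enumerate(next_pts)
    )
    links, used_next = {}, set()
    for d, i, j in pairs:
        if d > max_dist:
            break  # pairs are sorted, so every remaining pair is farther still
        if i in links or j in used_next:
            continue
        links[i] = j
        used_next.add(j)
    return links


prev_frame = [(10.0, 10.0), (20.0, 5.0), (30.0, 30.0)]
next_frame = [(20.5, 5.2), (10.3, 9.8)]
print(link_frames(prev_frame, next_frame))  # {0: 1, 1: 0}; cell 2 has no partner
```

Greedy pairing keeps the logic easy to follow; for densely packed cells, an optimal assignment such as the Hungarian algorithm (available as `scipy.optimize.linear_sum_assignment`) is usually the more robust choice.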

– Use BrainAlignNet to align frames and link cells over time.
– Run AutoCellLabeler to assign neuron identities within each animal.
– Apply CellDiscoveryNet to cluster and match cell types across animals.

Quality checks
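
Spot-checking automated calls against expert labels and routing low-confidence calls to a human reviewer takes only a few lines. The sketch below assumes each automated call carries a confidence score; `spot_check`, the cell IDs, the labels, and the 0.8 threshold are hypothetical.

```python
# Hypothetical spot-check helper; IDs, labels, and the threshold are illustrative.


def spot_check(predictions, expert, threshold=0.8):
    """Score automated calls against an expert-labeled subset and flag uncertain ones.

    predictions: {cell_id: (label, confidence)} from the automated labeler.
    expert: {cell_id: label} for the cells an expert checked by hand.
    Returns (accuracy on the checked cells, sorted ids with confidence < threshold).
    """
    checked = [cid for cid in expert if cid in predictions]
    correct = sum(predictions[cid][0] == expert[cid] for cid in checked)
    accuracy = correct / len(checked) if checked else float("nan")
    needs_review = sorted(cid for cid, (_, conf) in predictions.items() if conf < threshold)
    return accuracy, needs_review


predictions = {
    "cell_01": ("AVA", 0.97), "cell_02": ("RIM", 0.55),
    "cell_03": ("AIB", 0.91), "cell_04": ("AVA", 0.88),
}
expert = {"cell_01": "AVA", "cell_03": "AIY", "cell_04": "AVA"}
accuracy, needs_review = spot_check(predictions, expert)
print(f"{accuracy:.2f}", needs_review)  # 0.67 ['cell_02']
```

Note that cell_03 is confidently wrong here, which is why spot checks against expert labels complement, rather than replace, reviewing low-confidence calls.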

– Spot-check a subset against expert labels.
– Track uncertainty; review low-confidence calls.
– Iterate with improved training data if needed.

What’s next

Teams are expanding cell labeling in jellyfish and building microscopes to image free-swimming animals. As more neurons are tagged and recorded in natural motion, these AI tools can help extract clean signals and stable identities, even when bodies bend, twist, and stretch.

This study shows that neurons in moving animals can now be tracked reliably and at scale. With pixel-level alignment, high labeling accuracy, and even unsupervised discovery, labs can link brain activity to behavior faster and more affordably than before.

(Source: https://neurosciencenews.com/ai-neuron-tracking-movement-30183/)
