Managing AI coding in open-source reduces bugs and licensing risk while improving contributor speed

Managing AI coding in open-source needs clear rules, good tools, and steady reviews. This guide shows how to use code assistants to move faster while keeping licenses clean, security tight, and maintainers sane. Set simple guardrails, label AI changes, and track provenance so your project stays healthy and trusted.

Open-source now moves at AI speed. Code assistants write drafts, suggest tests, and explain errors. That helps new contributors join and veterans ship faster. But AI can also copy code with the wrong license, add quiet bugs, and flood maintainers with low‑quality pull requests. The path forward is not fear or hype. It is a clear policy, simple automation, and human review that focuses on value.

Why AI coding helps and hurts open source

The upside

Faster fixes and features: Assistants propose boilerplate and refactors.

Better onboarding: Tools explain code and suggest next steps.

More tests and docs: Generators draft examples and basic coverage.

Accessibility: Non-native speakers and beginners can contribute sooner.

The downside

License risk: Generated snippets may mirror code released under an incompatible license.

Security slips: Plausible but unsafe patterns can get in.

Maintenance load: Spammy or near‑duplicate PRs drain reviewer time.

Hidden bugs: Confident code without context or tests can mislead.

Good practices for managing AI coding in open-source reduce these risks and keep the benefits.

Managing AI coding in open-source: a practical playbook

1) Publish a simple AI use policy

Add AI.md that states what is allowed, what must be reviewed, and how to label AI-generated content.

Require a human to review and take responsibility for every AI change.

Offer examples of acceptable uses (tests, docs, refactors) and red lines (pasting unknown code).

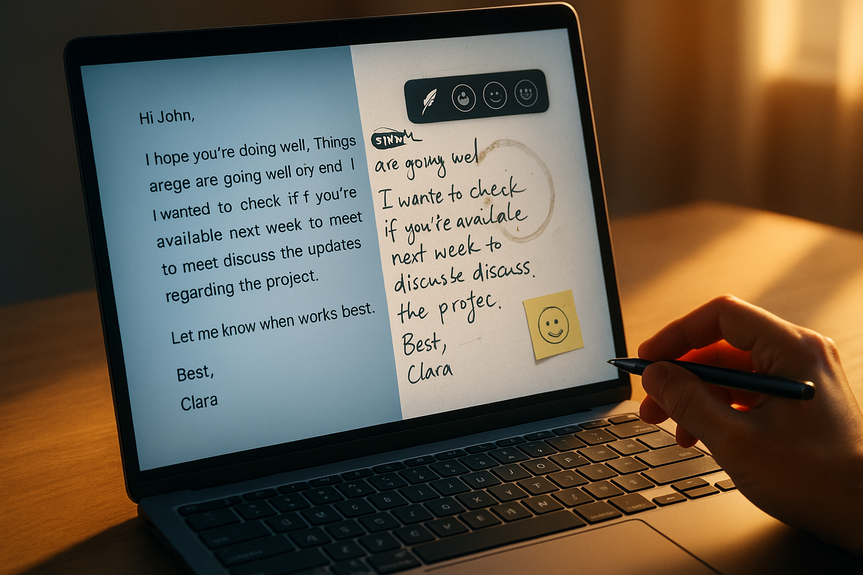

2) Update your pull request template

Checkbox: “I reviewed and understood all code I submit.”

Checkbox: “Contains tests or explains why not.”

Optional fields: “AI tools used” and “Brief prompt summary.”

Apply an “ai-generated” label to these PRs so triage and metrics stay clear.
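
A CI job could enforce the template instead of relying on reviewers to spot unticked boxes. The following is a minimal sketch, assuming the template renders the two checkboxes as Markdown task-list items with exactly the wording above. The function name `missing_checkboxes` is hypothetical and not part of any existing tool.

```python
import re

# Assumption: these strings match the checkbox wording in your PR template.
REQUIRED_CHECKBOXES = (
    "I reviewed and understood all code I submit.",
    "Contains tests or explains why not.",
)


def missing_checkboxes(pr_body: str) -> list[str]:
    """Return the required checkbox texts that are not ticked in the PR body."""
    ticked = set()
    for line in pr_body.splitlines():
        # Matches Markdown task-list items such as "- [x] text" or "* [X] text".
        match = re.match(r"\s*[-*]\s*\[([ xX])\]\s*(.+?)\s*$", line)
        if match and match.group(1).lower() == "x":
            ticked.add(match.group(2))
    return [text for text in REQUIRED_CHECKBOXES if text not in ticked]
```

A check like this would fail the build when the returned list is non-empty and echo the missing items back to the contributor.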

3) Enforce small, reviewable changes

Set a soft limit on PR size and ask for split PRs.

Block PRs that fail checks or that lack tests without an explanation.

Encourage draft PRs early to steer effort.
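
One way to apply a soft size limit is to total the changed lines reported by `git diff --numstat`, which prints added lines, deleted lines, and path separated by tabs. This sketch assumes a limit of 400 changed lines, an illustrative number rather than a recommendation, and the function names are hypothetical.

```python
def changed_lines(numstat: str) -> int:
    """Sum added and deleted lines from `git diff --numstat` output."""
    total = 0
    for line in numstat.splitlines():
        parts = line.split("\t")
        if len(parts) < 3:
            continue  # skip blank or malformed lines
        added, deleted = parts[0], parts[1]
        if added == "-" or deleted == "-":
            continue  # git reports "-" for binary files
        total += int(added) + int(deleted)
    return total


def exceeds_soft_limit(numstat: str, soft_limit: int = 400) -> bool:
    """True when the PR is large enough that a split should be requested."""
    return changed_lines(numstat) > soft_limit
```

Because the limit is soft, a bot might only comment with a request to split the PR rather than fail the check outright.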

4) Keep license hygiene tight

Run automated license scanners on PRs and dependencies.

Forbid code pasted from unknown public gists or answers.

Document allowed sources (project code, contributor’s own code, compatible licensed code).

Use a CONTRIBUTING.md section on license compliance and attribution.
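
The core of a license check is a comparison against an allow-list. The sketch below assumes you already have a mapping from dependency name to SPDX license identifier, for example exported from whichever scanner you use. Which licenses count as compatible depends on your project's own license, so the allow-list shown here is purely illustrative.

```python
from typing import Optional

# Assumption: an example allow-list; replace it with what your license permits.
ALLOWED_LICENSES = frozenset({"MIT", "BSD-2-Clause", "BSD-3-Clause", "Apache-2.0"})


def license_violations(dependencies: dict[str, Optional[str]]) -> dict[str, str]:
    """Map each problematic dependency to the reason it was flagged."""
    violations = {}
    for name, spdx_id in sorted(dependencies.items()):
        if spdx_id is None:
            violations[name] = "unknown license"
        elif spdx_id not in ALLOWED_LICENSES:
            violations[name] = f"{spdx_id} is not on the allow-list"
    return violations
```

Treating an unknown license as a violation keeps the default safe: a human has to look before the dependency is accepted.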

5) Strengthen security and provenance

Enable secret scanning, dependency alerts, and static analysis.

Generate an SBOM for releases and sign artifacts.

Adopt branch protection rules that require passing checks, code review, and a DCO sign-off or CLA.

Add a SECURITY.md with clear reporting steps and response-time targets (SLAs).

6) Raise the bar on quality signals

Require style checks, linters, and formatting in CI.

Set minimum test coverage for changed lines.

Add performance budgets if the project is speed‑sensitive.

Ban “TODO” without a linked issue.
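
The TODO rule is easy to automate. This is a minimal sketch, assuming a TODO counts as linked when the same line contains an issue reference such as `#123` or an issue URL. The patterns and the function name `unlinked_todos` are illustrative assumptions.

```python
import re

TODO_PATTERN = re.compile(r"\bTODO\b")
# Assumption: "#123" or a URL ending in /issues/123 counts as a linked issue.
ISSUE_PATTERN = re.compile(r"#\d+|https?://\S+/issues/\d+")


def unlinked_todos(source: str) -> list[int]:
    """Return 1-based line numbers that contain a TODO without an issue reference."""
    return [
        number
        for number, line in enumerate(source.splitlines(), start=1)
        if TODO_PATTERN.search(line) and not ISSUE_PATTERN.search(line)
    ]
```

Running it only over the lines a PR adds, rather than the whole file, avoids penalizing contributors for TODOs that predate the rule.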

7) Guide safe tool use and privacy

Do not paste secrets or private code into third‑party tools.

Prefer on‑prem or self‑hosted models for sensitive repos.

Document which files are safe to share with tools.

Encourage prompts that ask for patterns, not copy‑paste solutions.
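
Contributors can run a quick local check before pasting text into a third-party tool. The sketch below is a heuristic under stated assumptions: the three patterns are examples only, they will miss many kinds of secrets, and they do not replace a dedicated secret scanner. The names `SECRET_PATTERNS` and `secret_findings` are hypothetical.

```python
import re

# Assumption: a small, non-exhaustive set of example patterns.
SECRET_PATTERNS = {
    "private key block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "AWS access key ID": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "hard-coded credential": re.compile(
        r"\b(password|secret|api_key|token)\b\s*[:=]\s*['\"][^'\"]{8,}['\"]",
        re.IGNORECASE,
    ),
}


def secret_findings(text: str) -> list[str]:
    """Return the names of the patterns that match somewhere in the text."""
    return [name for name, pattern in SECRET_PATTERNS.items() if pattern.search(text)]
```

An empty result means only that none of these patterns matched, not that the text is safe to share.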

8) Improve maintainer workflow, not just gates

Use labels and triage bots to route AI‑heavy PRs to reviewers who opt in.

Add templates that show “good PR” examples.

Schedule review rotations to avoid burnout.

Offer a “good first issue” board with clear acceptance criteria.

9) Plan legal and governance fallbacks

Require DCO sign‑off or a CLA for clear contributor intent.

Keep a simple process to revert risky merges fast.

Publish how you will handle license violations and appeals.

Protect trademarks and brand usage in docs and forks.
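
A DCO sign-off is a `Signed-off-by:` trailer in the commit message, which `git commit -s` adds automatically. The following sketch shows roughly what a sign-off check looks for. It is deliberately loose about the name and email format, and the function name `has_dco_sign_off` is hypothetical. Existing DCO bots also compare the trailer with the commit author, which this sketch does not do.

```python
import re

# A line of the form "Signed-off-by: Name <email>" anywhere in the message.
SIGN_OFF = re.compile(r"^Signed-off-by: .+ <[^<>\s]+@[^<>\s]+>$", re.MULTILINE)


def has_dco_sign_off(commit_message: str) -> bool:
    """True when the commit message carries a Signed-off-by trailer."""
    return SIGN_OFF.search(commit_message) is not None
```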

10) Measure and improve

Track PR acceptance rate, rework rate, time‑to‑merge, and test coverage.

Tag reverted commits to find root causes and fix policy or tooling.

Review metrics monthly; update AI.md and templates as trends shift.
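
Most of these metrics can be computed from a simple export of PR records. The sketch below assumes a hypothetical `PullRequest` record with three fields and defines rework rate as the share of PRs that needed at least one round of requested changes. That definition is an assumption; adjust it to whatever your project means by rework.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median
from typing import Optional


@dataclass
class PullRequest:
    opened_at: datetime
    merged_at: Optional[datetime]  # None when the PR was closed without merging
    change_request_rounds: int     # times reviewers requested changes


def acceptance_rate(prs: list[PullRequest]) -> float:
    """Share of PRs that were merged."""
    if not prs:
        return 0.0
    return sum(pr.merged_at is not None for pr in prs) / len(prs)


def rework_rate(prs: list[PullRequest]) -> float:
    """Share of PRs with at least one round of requested changes (assumed definition)."""
    if not prs:
        return 0.0
    return sum(pr.change_request_rounds > 0 for pr in prs) / len(prs)


def median_hours_to_merge(prs: list[PullRequest]) -> Optional[float]:
    """Median time from open to merge in hours, or None if nothing was merged."""
    hours = [
        (pr.merged_at - pr.opened_at).total_seconds() / 3600
        for pr in prs
        if pr.merged_at is not None
    ]
    return median(hours) if hours else None
```

Computing the same numbers separately for PRs with and without the “ai-generated” label makes it easier to see whether the guardrails affect the two groups differently.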

Case patterns and quick wins

Quick wins this week

Add “ai-generated” and “needs-tests” labels; wire them into triage.

Ship a short AI.md and update the PR template with two checkboxes.

Turn on secret scanning, dependency alerts, and required status checks.

Enable a linter and formatter to stop style debates early.

Review checklist for AI‑assisted PRs

License: Is every new file and large snippet clearly original or compatible?

Tests: Do changes have unit/integration tests that prove behavior?

Clarity: Are variable names, comments, and commit messages human‑readable?

Security: Any unsafe patterns (injection, weak crypto, bad auth)?

Performance: Any new hot paths or extra allocations?

Contributor guidance you can paste into CONTRIBUTING.md

Use AI to draft, but submit only code you understand and can defend.

Prefer small PRs. Explain the “why,” not just the “what.”

Add tests. If you cannot, explain the plan in the PR.

State if AI tools helped and link to related issues.

Signals that your guardrails work

Fewer revert commits after merge.

Stable or rising coverage on changed lines.

Shorter review cycles for small, well‑tested PRs.

Fewer duplicate or near‑duplicate submissions.

Maintainers report less time spent on low‑value triage.

Healthy open-source is human first, tool second. AI can draft, suggest, and speed up, but people must choose, test, and explain. With a short policy, clear templates, and a few strong checks, you can keep speed and trust. That is the heart of managing AI coding in open-source and safeguarding your project’s future.

(Source: https://techcrunch.com/2026/02/19/for-open-source-programs-ai-coding-tools-are-a-mixed-blessing/)

FAQ

Q: What are the main benefits and risks of AI coding tools for open-source projects?

A: AI coding tools speed up fixes and features, improve onboarding by explaining code, and help generate tests and documentation while making projects more accessible. However, they can introduce license risk through copied snippets, create plausible but unsafe patterns, flood maintainers with low‑quality pull requests, and add hidden bugs.

Q: What should an AI use policy for an open-source project include?

A: Publish an AI.md that states what is allowed, what must be reviewed, and how to label AI-generated content, and require a human to review and take responsibility for every AI change. Offer examples of acceptable uses such as tests, docs, and refactors, and list red lines like pasting unknown code.

Q: How can pull request templates be updated to support AI-assisted contributions?

A: Add checkboxes confirming contributors reviewed and understood their submission and that changes include tests or explain why not, and include optional fields for “AI tools used” and a brief prompt summary. Label AI-generated PRs so triage and metrics remain clear.

Q: How can projects keep license hygiene tight when contributors use AI assistants?

A: Run automated license scanners on PRs and dependencies, forbid pasting code from unknown public gists or answers, and document allowed sources such as project code, a contributor’s own code, or compatibly licensed code. Include a CONTRIBUTING.md section on license compliance and attribution.

Q: What security and provenance practices should open-source projects adopt for AI-assisted changes?

A: Enable secret scanning, dependency alerts, and static analysis, generate an SBOM for releases, and sign artifacts to improve provenance. Adopt branch protection rules requiring passing checks, code review, and DCO/CLA, and publish a SECURITY.md with clear report steps and SLAs.

Q: How can maintainers avoid burnout from AI-generated or AI-heavy pull requests?

A: Use labels and triage bots to route AI-heavy PRs to reviewers who opt in, schedule review rotations, and offer a “good first issue” board with clear acceptance criteria to guide contributors. Encourage small, reviewable changes and early draft PRs, and block PRs without tests or with failing checks to reduce low-value triage.

Q: What quick wins can a project implement this week to manage AI-assisted contributions?

A: Add “ai-generated” and “needs-tests” labels wired into triage, ship a short AI.md, update the PR template with two checkboxes, and enable secret scanning, dependency alerts, and required status checks. Turn on a linter and formatter to stop style debates early.

Q: How should projects measure whether their approach to managing AI coding in open-source is effective?

A: Track metrics like PR acceptance rate, rework rate, time-to-merge, and test coverage on changed lines, and tag reverted commits to find root causes and fix policy or tooling. Review these metrics monthly and update AI.md and templates as trends shift to keep guardrails working.