
01 Oct 2025


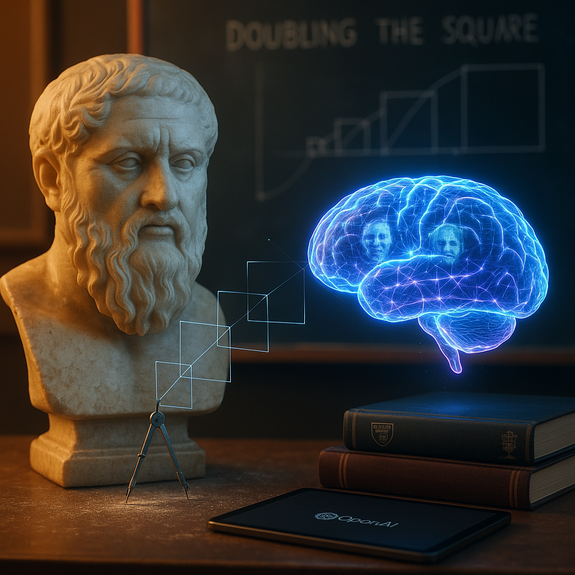

How a ChatGPT mathematical reasoning study reveals AI thinking

A ChatGPT mathematical reasoning study shows AI can improvise solutions, helping teachers check proofs

Inside the ChatGPT mathematical reasoning study

The ancient puzzle meets a modern chatbot

In Plato’s dialogue Meno, Socrates asks a student to double a square’s area. The student first doubles the side length. That turns a square with side s into one with side 2s and area 4s², which is four times the original area, not two. Socrates guides the student toward the diagonal. The diagonal of the original square is s√2, and a square with side s√2 has area (s√2)² = 2s². That is exactly double.

The study team used this well-known result as a starting point. They argued that a text-trained model like ChatGPT was unlikely to have simply memorized a rare, image-heavy geometric construction. So if the chatbot solved it correctly, it might be doing more than lookup. It could be assembling a fresh chain of ideas from the prompt.

They then pushed the test further and asked ChatGPT how to double the area of a rectangle using similar reasoning. The chatbot claimed there was no geometric solution because a rectangle’s diagonal cannot be used the way a square’s can. The premise is true: the rectangle’s diagonal does not solve the problem by itself. The conclusion is false: a geometric solution exists. Visiting Cambridge scholar Nadav Marco and mathematics education professor Andreas Stylianides said the chance of this false claim appearing in the training data was “vanishingly small.” That suggests the answer was invented on the fly. ChatGPT took the square idea, tried to transfer it, and then stated a wrong general rule. In one sense, that is what novice learners do: they over-generalize from a known pattern and then adjust. The ChatGPT mathematical reasoning study captured that learner-like behavior, including the error.

Why this matters for “AI reasoning”

The researchers do not claim ChatGPT “thinks” like a person. They do suggest its behavior can look like a student working inside the “zone of proximal development”: the space between what a learner can do alone and what they can do with guidance. With careful prompts, the model produced ideas that were not obvious copies of likely training text. With a nudge in the wrong direction, it produced a confident but incorrect claim.

This behavior links to the old “black box” issue in AI. We can see outputs, but not the internal steps that lead to them. That makes verification essential, especially in math. The study’s conclusion is practical: AI can help explore, but students must learn to test the steps, critique the reasoning, and ask for clearer definitions and checks.

Why the rectangle answer was wrong, and the geometry that works

The diagonal trick works for a square — not for every rectangle

For a square, the diagonal has a special role. It is longer than the side by a factor of exactly √2. That factor comes from the Pythagorean theorem: the diagonal of a square with side s is √(s² + s²) = s√2. That is why using the diagonal as the new side doubles the area neatly.

Rectangles do not share that simple relation. If a rectangle has sides a and b, its diagonal is √(a² + b²). The diagonal equals a side times √2 only when a = b, which makes the rectangle a square. So the diagonal does not provide a general “double-area” side for rectangles.

A simple, correct way to double any rectangle’s area
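Before turning to the method that does work, the diagonal argument above can be checked numerically. The sketch below is illustrative only, and the function names are assumptions rather than anything from the study. It compares the area of a square built on a rectangle's diagonal, a² + b², with the target area 2ab. The gap between the two is a² + b² − 2ab = (a − b)², which is zero only when a = b.

```python
import math


def square_on_diagonal_area(a: float, b: float) -> float:
    """Area of a square whose side is the diagonal of an a-by-b rectangle."""
    # By the Pythagorean theorem the diagonal is sqrt(a^2 + b^2),
    # so the square built on it has area a^2 + b^2.
    return a * a + b * b


def diagonal_doubles_area(a: float, b: float) -> bool:
    """True if the square on the diagonal has twice the rectangle's area."""
    return math.isclose(square_on_diagonal_area(a, b), 2 * a * b)


# Squares (a == b) pass the check; other rectangles do not.
for a, b in [(1, 1), (4, 4), (3, 5), (1, 10)]:
    print(a, b, 2 * a * b, square_on_diagonal_area(a, b),
          diagonal_doubles_area(a, b))
```

The check only samples a few rectangles. The identity in the lead-in is what shows the result holds in general.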

You can double the area of any rectangle with a straightforward geometric idea: scale both dimensions by a factor of √2 if you want to keep the same shape, or scale just one dimension by 2 if you do not care about shape. Here are the two options:

– Keep the shape (similar rectangle):
  – Original rectangle: sides a and b, area ab.
  – To double the area while keeping the same shape, multiply both sides by √2.
  – New rectangle: sides a√2 and b√2, area (a√2)(b√2) = 2ab.
– Change the shape (not similar):
  – Keep side a the same. Double side b to 2b.
  – New rectangle: sides a and 2b, area a(2b) = 2ab.

There are classic Euclidean constructions that achieve the √2 factor using only a compass and straightedge. One easy route is to construct a right triangle whose legs are equal. If each leg is 1 unit, the hypotenuse measures √2 units. You can then use that length to scale your rectangle.

A visual construction idea (compass and straightedge)
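The two options above, along with the equal-legs right triangle used to obtain the √2 factor, can be mirrored in a short Python sketch. The function names are hypothetical, and `math.hypot` stands in for the compass-and-straightedge step: it returns the hypotenuse of a right triangle with the given legs.

```python
import math


def hypotenuse_of_equal_legs(leg: float) -> float:
    """Hypotenuse of a right triangle whose two legs both have length `leg`."""
    return math.hypot(leg, leg)  # equals leg * sqrt(2)


def double_area_same_shape(a: float, b: float) -> tuple[float, float]:
    """Scale both sides by sqrt(2): the new rectangle is similar to the old one."""
    return hypotenuse_of_equal_legs(a), hypotenuse_of_equal_legs(b)


def double_area_new_shape(a: float, b: float) -> tuple[float, float]:
    """Keep side a and double side b: the shape changes."""
    return a, 2 * b


a, b = 3, 5
for new_a, new_b in (double_area_same_shape(a, b), double_area_new_shape(a, b)):
    # Report the new sides, the area ratio, and the new aspect ratio.
    print(new_a, new_b, (new_a * new_b) / (a * b), new_a / new_b)
```

Both options give an area ratio of 2 up to floating-point rounding. Only the first keeps the aspect ratio a/b.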

If you want a construction that preserves shape:

– Draw the original rectangle with sides a and b.
– On a separate line, mark a segment of length 1 and build a right triangle with both legs of length 1. The hypotenuse has length √2.
– Repeat the construction with both legs of length a, then with both legs of length b. The hypotenuses have lengths a√2 and b√2. Transfer those lengths with a compass.
– Draw the new rectangle with sides a√2 and b√2. Its area is doubled and it is similar to the original.

If you are fine with a shape change (simpler in practice):

– Extend one side b to 2b (measure and mark).
– Keep the other side a the same.
– Draw the new rectangle. The area has doubled.

These methods show that a rectangle’s area can be doubled by pure geometry. The mistake in the chatbot’s answer was not the observation that the diagonal is unhelpful for rectangles; it was the leap to “no solution exists.” The ChatGPT mathematical reasoning study highlights this exact misstep.

What counts as reasoning for AI?

Patterns, hypotheses, and the “learner-like” label

The model seemed to test a pattern: “Diagonal solves doubling for a square.” It then tried to apply that pattern to rectangles. When the pattern did not fit, it declared a dead end. A human teacher would pause there, ask for another path, and push toward scaling. With the right prompt, the model might have shifted to “What factor multiplies area by two?” and then found √2. This is why the researchers say the model looked “learner-like.” It explored, generalized, and erred, all within a guided conversation.

But we must keep the limit in mind. ChatGPT does not have geometric intuition. It predicts the next likely phrase based on training and prompt context. The useful trick is to structure prompts that elicit testable steps, not just confident prose.

How to prompt and verify in math class

Prompting that teaches, not just answers

Teachers can get more value when they frame a dialog that asks the model to explore and check. Try prompts that set goals and constraints:

– “Let’s explore how to double a rectangle’s area. First, list two different methods.”
– “Keep the rectangle’s shape the same. What scale factor multiplies area by two?”
– “Propose a geometric construction. Then explain how to verify it.”

Avoid prompts like “Just give me the final answer,” especially for geometry. You want steps you can test, not a single claim.

Verification first, speed second
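When a model answers a prompt such as “What scale factor multiplies area by two?”, the claimed factors can be tested on a few rectangles before anyone trusts the explanation. The sketch below is one possible check, not a method from the study. The function names and test values are assumptions, and the simple case a = b = 1 is included on purpose.

```python
import math


def area_ratio(scale_a: float, scale_b: float, a: float, b: float) -> float:
    """Ratio of the scaled rectangle's area to the original a-by-b area."""
    return (scale_a * a) * (scale_b * b) / (a * b)


def check_scale_claim(scale_a: float, scale_b: float,
                      expected_ratio: float = 2.0,
                      test_values=((1, 1), (3, 5), (0.5, 8))) -> bool:
    """True if the claimed scale factors give the expected ratio on every test."""
    return all(
        math.isclose(area_ratio(scale_a, scale_b, a, b), expected_ratio)
        for a, b in test_values
    )


root2 = math.sqrt(2)
print(check_scale_claim(root2, root2))  # scale both sides by sqrt(2)
print(check_scale_claim(1, 2))          # double one side only
print(check_scale_claim(2, 2))          # the student's first guess
```

The first two claims pass and the third fails, because doubling both sides multiplies the area by four.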

Even when a solution looks right, build in checks:

– Recompute areas using symbolic expressions, not only numbers.
– Test with simple values (e.g., a = b = 1) to watch the scale clearly.
– Ask for an alternative method and see if both methods agree on the final numbers.
– If the model cites a rule, ask it to name the theorem and restate it in one sentence.

Teach students to critique AI like a proof

Students can treat AI output as a draft:

– Identify assumptions. Are they stated?
– Check each step for validity. Does the step follow a known rule?
– Look for leaps. Where does the reasoning jump from pattern to claim?
– Rewrite the proof in your own words. If you cannot, you do not own it yet.

These habits turn the black box into a glass box. The process becomes visible, even if the model’s internals are not.

From text-only chat to richer math tools

Better tools for geometry and proofs

The study suggests new combinations:

– Link a chatbot with a dynamic geometry system (DGS) so it can draw, drag, and test figures on screen.
– Pair it with a theorem prover to check each step for logical soundness.
– Add a numeric sandbox where the model can run quick calculations on chosen test values.

Such a setup would let students see and test ideas at the same time. It would reduce the risk of smooth but wrong explanations.

Why multimodal models could help

Because much of geometry is visual, models that handle text and images may reason better about shapes. If a chatbot can produce and inspect a diagram while it explains, it has more ways to catch errors. Still, the core rule stands: students must check claims against definitions, theorems, and measurements.

What educators can take from the findings

New skills for the curriculum

The researchers argue for teaching students how to read, question, and refine AI-generated proofs. That means:

– Understanding the difference between a correct result and a valid proof.
– Knowing theorems well enough to spot misuse.
– Applying counterexamples to test claims.
– Using precise language when asking and answering.

The goal is not to ban AI, but to learn with it. The class becomes a workshop where students and AI co-create drafts, then fix them.

Prompt frameworks that support learning
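The habit of applying counterexamples to test claims can be practiced in a few lines of Python. This is a minimal sketch with hypothetical function names. It tests two claims from the opening puzzle: the student's first guess that doubling the side doubles the area, and the diagonal answer that a side of s√2 does.

```python
import math


def find_counterexample(claim, candidates):
    """Return the first candidate for which the claim fails, or None."""
    for s in candidates:
        if not claim(s):
            return s
    return None


def doubling_side_doubles_area(s: float) -> bool:
    """The student's first guess: a square of side 2s has twice the area."""
    return math.isclose((2 * s) ** 2, 2 * s ** 2)


def diagonal_side_doubles_area(s: float) -> bool:
    """The diagonal answer: a square of side s*sqrt(2) has twice the area."""
    return math.isclose((s * math.sqrt(2)) ** 2, 2 * s ** 2)


sides = [1, 2, 3, 5, 10]
print(find_counterexample(doubling_side_doubles_area, sides))
print(find_counterexample(diagonal_side_doubles_area, sides))
```

A single failing value is enough to reject a claim. Finding no counterexample in a short list is not a proof, which is the difference between a correct result and a valid proof.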

A simple, repeatable framework helps:

– Clarify the task: “We want to double area.”
– Set constraints: “Keep the rectangle similar to the original.”
– Ask for two approaches: “Scaling and construction.”
– Require a check: “Prove the area doubles. Show the numeric test with a = 3, b = 5.”
– Reflect: “Why did the diagonal trick work for a square but not a general rectangle?”

This structure keeps the model inside the zone of proximal development. It invites hypotheses and validation in a tidy loop.

Limits and promise

Do not over-interpret “thinking”

The Cambridge and Jerusalem team are careful. They do not say the model “understands” geometry. They say it behaved like a student at times, including making a wrong generalization. That is useful because it shows how prompts and feedback can guide the model toward better steps. But it also shows why human oversight is non-negotiable.

Where research can go next

Future studies can:

– Test newer models on a wider set of geometry and algebra problems.
– Compare how models handle visual tasks before and after pairing with DGS software.
– Measure how often the model invents false rules and what prompts reduce that risk.
– Track learning outcomes when students practice verifying AI-generated proofs.

This agenda aims to move from “impressive answers” to “reliable methods” that teachers can trust.

The core lesson is straightforward. ChatGPT can propose ideas that look fresh, and it can make errors with the same confidence. The ChatGPT mathematical reasoning study shows both sides at once: improvisation under guidance, and the need for checks at every step. If teachers and students learn to prompt, test, and revise, AI can become a helpful partner in math learning.

In short, the ancient puzzle still teaches a modern truth. Good reasoning needs clear steps and proof. AI can join the process, but it cannot replace it. With the habits above, the benefits outweigh the risks, and the next chapter of classroom math will be more hands-on, more visual, and more rigorous. That is the lasting value of the ChatGPT mathematical reasoning study.
