Microsoft Copilot confidential email leak highlights gaps: use our checklist to lock down mail now

Microsoft confirmed a bug that let Copilot Chat pull summaries from Drafts and Sent Items, even when emails were labeled confidential. The Microsoft Copilot confidential email leak did not expose data to new people, but it broke trust. Here is what happened, and simple steps you can take to secure data now.

Microsoft says a recent Copilot Chat issue caused summaries of messages in Outlook’s Drafts and Sent folders to appear, even when those emails carried a confidential label and were covered by data loss prevention rules. The company rolled out a configuration fix and said access controls were not bypassed. Still, experts warn that fast AI rollouts can create safety gaps. A notice shared on an NHS support dashboard tied the root cause to a “code issue,” and reports suggest Microsoft learned of the problem in January.

What went wrong in the Microsoft Copilot confidential email leak

How Copilot Chat works in Outlook and Teams

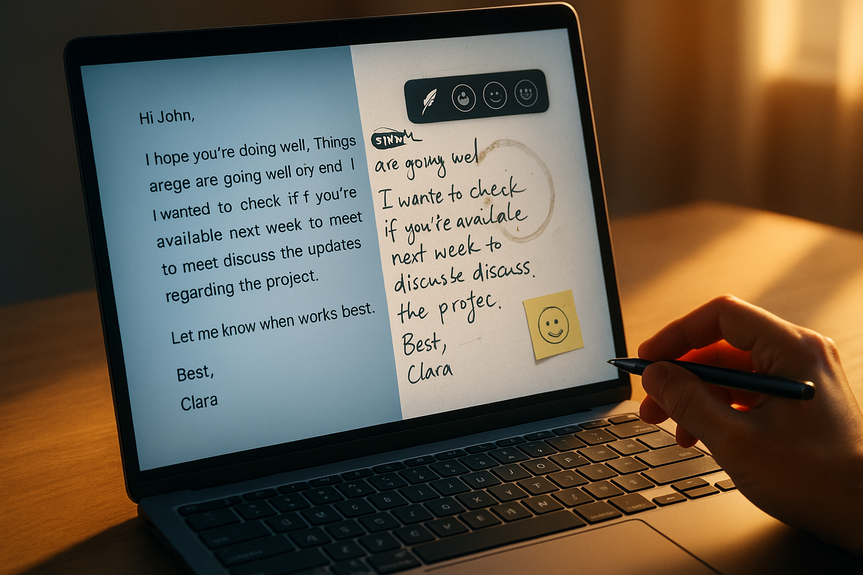

Copilot Chat answers questions and creates summaries based on the data a user can already access. It pulls context from emails, chats, and files to save time. In this case, it also summarized content from Drafts and Sent Items that carried sensitivity labels.

Where protections faltered

Sensitivity labels and data loss prevention (DLP) aim to stop sharing of protected content. The issue caused Copilot to summarize labeled emails anyway. Microsoft says no one gained new access, but the behavior did not match the intended design, which is to exclude protected content.

Who was impacted

The problem affected some enterprise users. An NHS notice indicated awareness of the bug but said patient information was not exposed. Draft and sent emails remained tied to their creators. The fix has now been deployed worldwide, according to Microsoft.

Immediate steps to reduce risk today

Lock down access and scope

Make AI features opt-in for high-risk teams (legal, HR, finance, healthcare).

Limit Copilot to a pilot group while you validate controls and logging.

Review who can use the “work” tab and what sources it can summarize.
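As a rough illustration of the scoping rules above, the Python sketch below models an opt-in gate. The `User` fields, the `HIGH_RISK_TEAMS` set, and the `copilot_allowed` function are hypothetical names chosen for this example, not Microsoft 365 settings; in a real tenant the same decision would be enforced through licensing and group assignment.

```python
from dataclasses import dataclass

# Assumption: team names mirror the high-risk groups named in the checklist.
HIGH_RISK_TEAMS = {"legal", "hr", "finance", "healthcare"}


@dataclass(frozen=True)
class User:
    name: str
    team: str
    in_pilot_group: bool = False  # member of the validated pilot group
    opted_in: bool = False        # explicit opt-in, required for high-risk teams


def copilot_allowed(user: User) -> bool:
    """Allow only pilot members; high-risk teams must also opt in explicitly."""
    if not user.in_pilot_group:
        return False
    if user.team.lower() in HIGH_RISK_TEAMS:
        return user.opted_in
    return True
```

The gate denies by default: a user outside the pilot group is refused regardless of team, which matches the "opt-in, not opt-out" posture recommended later in this checklist.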

Verify labels and DLP actually bite

Update Microsoft 365 apps and confirm the Copilot fix is applied.

Run safe tests: ask Copilot to summarize labeled emails and verify it refuses.

Use auto-labeling policies for known sensitive data (PII, health, financial).
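One way to make the "safe test" step repeatable is a small harness like the Python sketch below. It assumes you have seeded a test mailbox with messages you wrote yourself, each containing a unique canary string. `SeededEmail`, `find_label_leaks`, and the `ask_assistant` callable are hypothetical: the callable stands in for however your team submits a prompt and captures the reply, and it is not a Copilot API.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass(frozen=True)
class SeededEmail:
    """A test message you created yourself, never real confidential mail."""
    subject: str
    label: str   # sensitivity label applied to the message
    canary: str  # unique marker string planted in the body


def find_label_leaks(
    emails: Iterable[SeededEmail],
    ask_assistant: Callable[[str], str],
) -> List[str]:
    """Return subjects of confidential test emails whose canary shows up in a reply."""
    leaks = []
    for email in emails:
        if email.label.lower() != "confidential":
            continue  # only labeled messages are expected to be refused
        reply = ask_assistant(f"Summarize the email with subject '{email.subject}'")
        if email.canary in reply:
            leaks.append(email.subject)
    return leaks


# Stand-in for the assistant: a hypothetical stub that always refuses.
def refusing_stub(prompt: str) -> str:
    return "I can't summarize that item because it is protected."


seeded = [
    SeededEmail("Q3 plan", "Confidential", "CANARY-7f3a"),
    SeededEmail("Lunch menu", "General", "CANARY-91bc"),
]
print(find_label_leaks(seeded, refusing_stub))  # prints []
```

A canary check only catches verbatim leakage. A paraphrased summary would slip past it, so treat an empty result as a first pass and review the replies by hand as well.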

Tighten identity and device posture

Require multifactor authentication and compliant devices for Copilot use.

Block access from shared or unmanaged endpoints.

Disable Copilot in tenants or apps where governance is not ready.

Increase oversight

Turn on auditing. Review logs for unusual Copilot prompts and responses.

Set alerts for access to confidential content, even if by the original author.

Establish a fast “kill switch” to pause Copilot if you see drift or bugs.
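The oversight steps above can be sketched as a review loop that trips a pause flag. This Python example is a minimal illustration under stated assumptions: `AuditRecord`, `KillSwitch`, and the operation name `"assistant_summary"` are invented for the example and do not correspond to real Microsoft 365 audit log fields. The actual pause would be whatever disable mechanism your tenant supports.

```python
from dataclasses import dataclass, field
from typing import Iterable, List


@dataclass(frozen=True)
class AuditRecord:
    user: str
    operation: str   # hypothetical operation name, e.g. "assistant_summary"
    item_label: str  # sensitivity label of the item that was touched


@dataclass
class KillSwitch:
    threshold: int = 1  # number of hits that triggers a pause
    paused: bool = False
    hits: List[AuditRecord] = field(default_factory=list)

    def review(self, records: Iterable[AuditRecord]) -> bool:
        """Collect summaries of confidential items; pause once the threshold is reached."""
        for record in records:
            if (record.operation == "assistant_summary"
                    and record.item_label.lower() == "confidential"):
                self.hits.append(record)
        if len(self.hits) >= self.threshold:
            self.paused = True
        return self.paused
```

Hits accumulate across calls, so the switch can be fed batches of records as they arrive. Once `paused` is set it stays set until someone resets it, which keeps the decision to resume in human hands.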

Hardening Microsoft 365 for AI assistants

Strengthen data foundations

Build a clear data map. Know where confidential content lives.

Apply default sensitivity labels to mailboxes and shared workspaces.

Use retention policies to reduce how long sensitive drafts sit in mailboxes.

Reduce exposure by design

Adopt private-by-default settings. Make new AI features opt-in, not opt-out.

Restrict connectors and plugins that widen Copilot’s data reach.

Limit Copilot to non-sensitive repositories until controls are proven.

Monitor, test, and respond

Continuously test Copilot with red-team style prompts that try to bypass labels.

Log prompt/response metadata. Watch for summaries of protected items.

Prepare an incident playbook specific to AI output and data leakage.
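To illustrate logging prompt and response metadata without storing the sensitive text itself, here is a minimal Python sketch. The function `build_log_entry`, the `PROTECTED_LABELS` set, and the entry fields are assumptions made for this example; they are not a Microsoft logging schema, and the label names should be replaced with the ones your tenant actually uses.

```python
import hashlib
from datetime import datetime, timezone
from typing import Dict, Iterable

# Assumption: label names treated as protected in this example.
PROTECTED_LABELS = {"confidential", "highly confidential"}


def build_log_entry(
    user: str,
    prompt: str,
    response: str,
    source_labels: Iterable[str],
) -> Dict[str, object]:
    """Record metadata about one exchange; raw prompt and response text are not kept."""
    protected = sorted({label.lower() for label in source_labels} & PROTECTED_LABELS)
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        # A hash lets reviewers spot repeated prompts without reading them.
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "prompt_chars": len(prompt),
        "response_chars": len(response),
        "protected_labels": protected,
        "needs_review": bool(protected),
    }
```

Entries with `needs_review` set are the ones to watch: they mark a response that drew on at least one protected item, which is the behavior this incident showed should not occur.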

Governance lessons from the Microsoft Copilot confidential email leak

Speed without guardrails invites errors. Stage rollouts and require approvals.

Treat AI features like a data integration, not a chatbot. Model its blast radius.

Make “no sensitive data” the default until validation proves safe handling.

Communicate clearly with users: what Copilot can see, and what it must not.

Keep a clear change log. New AI capabilities should pass governance review.

How to talk to your teams

Clear rules for safe prompts

Do not ask Copilot to summarize or share sensitive content.

Use labels correctly. Report any odd behavior right away.

When in doubt, handle confidential items without AI assistance.

Build trust through transparency

Explain what changed, what was fixed, and where limits remain.

Share test results that show protections working after the fix.

Invite feedback from risk owners and frontline users.

This event is a warning, not a reason to panic. AI assistants can help, but data safeguards must lead. Validate labels, tighten access, and monitor results. If you move with care, you can gain the speed of Copilot without risking another Microsoft Copilot confidential email leak.

(Source: https://www.bbc.com/news/articles/c8jxevd8mdyo)


FAQ

Q: What happened in the Microsoft Copilot confidential email leak?

A: Microsoft confirmed a bug in Microsoft 365 Copilot Chat that caused the tool to return summaries of emails stored in users’ Drafts and Sent Items, including messages labeled confidential. The company deployed a configuration update worldwide and said access controls and data protection policies remained intact.

Q: How does Copilot Chat normally work within Outlook and Teams?

A: Copilot Chat pulls context from emails, chats, and files that a user can already access to answer questions and create summaries. In this incident it also summarized content from Drafts and Sent Items, even when messages had sensitivity labels.

Q: Did the bug expose confidential messages to new people?

A: Microsoft said it “did not provide anyone access to information they weren’t already authorised to see” and that the behavior did not match its intended Copilot experience. An NHS support notice and Microsoft statements indicated that draft and sent emails remained with their creators and that patient information was not exposed.

Q: Who was affected by the Copilot Chat issue?

A: The problem affected some enterprise customers and was flagged on an NHS IT support dashboard, with reports suggesting Microsoft first became aware of the error in January. Microsoft said the configuration update has been deployed worldwide for enterprise customers.

Q: What immediate steps can organizations take to reduce risk now?

A: Organizations should make AI features opt-in for high-risk teams, limit Copilot to a pilot group, and review who can use the work tab and what sources it can summarize. They should also update Microsoft 365 apps, run safe tests to confirm Copilot refuses to summarize labeled emails, require multifactor authentication and compliant devices, enable auditing and alerts, and have a fast “kill switch” to pause Copilot if needed.

Q: How can organizations verify sensitivity labels and DLP work with Copilot?

A: Update Microsoft 365 apps to ensure the Copilot configuration fix is applied, then run safe tests by asking Copilot to summarize labeled emails and verifying it refuses. Use auto-labeling policies for known sensitive data and review results before wider rollout.

Q: What governance lessons emerged from the Microsoft Copilot confidential email leak?

A: The incident highlighted that speed without guardrails invites errors and that AI features should be staged with approvals, treated like a data integration, and set private-by-default until protections are proven. Organizations should also keep clear change logs and communicate to users what Copilot can and cannot access.

Q: How should teams be advised to interact with Copilot after the fix?

A: Teams should not ask Copilot to summarize or share sensitive content, should use sensitivity labels correctly, and should report any odd behavior immediately. When in doubt, they should handle confidential items without AI assistance. Sharing test results that show protections are working helps rebuild trust.